1 Getting Ready for Regression Cooking!

Learning Objectives

By the end of this chapter, you will be able to:

- Define the three core pillars to be applied in regression modelling throughout this book: a data science workflow, the right workflow flavour, and the most appropriate model.

- Outline how the ML-Stats dictionary works to bridge the terminology used in machine learning (ML) and statistics.

- Contrast the differences and similarities between supervised learning and regression analysis.

- Explain how the data science workflow can be applied in regression analysis.

- Describe how the mind map of regression analysis acts as the primary chapter structure of this book and as a toolboox.

1.1 Let the Cooking Begin!

Regression is one of the most widely used tools in statistics, data science, and scientific research because it helps us describe relationships, quantify uncertainty, and make predictions from data. Whether we want to understand how house prices change with square footage, assess whether a policy intervention has an effect, or forecast future outcomes for new observations, regression gives us a principled framework for connecting questions, data, models, and decisions. In this chapter, we will lay the foundation for that framework by introducing the core ideas, vocabulary, and workflow that will guide the rest of the book.

Firstly, we will introduce the foundations of regression analysis within a unified statistical and machine learning perspective. We also begin by connecting key terminology through a machine learning and statistics dictionary, followed by a conceptual overview of supervised learning and regression analysis. Then, we walk through a regression workflow used across this book, highlighting how inferential and predictive inquiries differ in goals, assumptions, and evaluation strategies. The chapter is organized as follows:

- Section 1.2 explains the ML-Stats Dictionary (ML stands for Machine Learning), which establishes common language between statistics and machine learning.

- Section 1.3 introduces the fundamentals of supervised learning and regression analysis. It clarifies what regression is trying to learn, estimate, explain, and predict.

- Section 1.4 sets up the data science workflow, which has an eight-stage structure used throughout the textbook.

- Section 1.5 ilustrates the mind map of regression analysis, which previews the broader family of regression models covered in the cookbook.

That said, we want to highlight one guiding principle for all of our work:

Different modelling estimation techniques in regression analysis become easier to understand once we develop a strong probabilistic and inferential grasp of populations or systems of interest.

The above guiding principle rests on foundational statistical ideas on how data is generated and how it can be modelled through various regression methods. We will explore these underlying concepts in Chapter 2. Before doing so, however, this chapter will build on the following three core pillars:

- Implementing a structured data science workflow as outlined in Section 1.4.

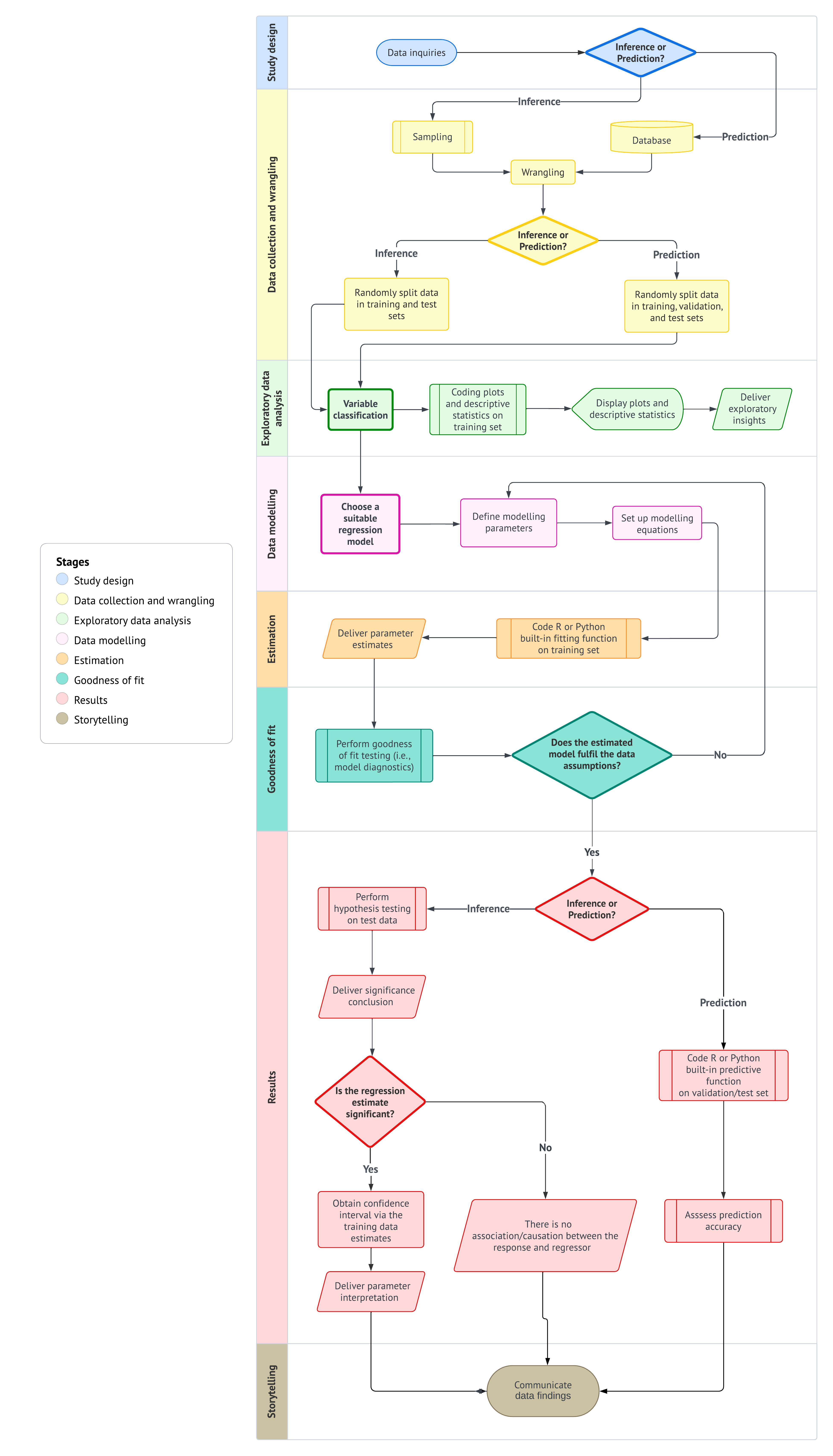

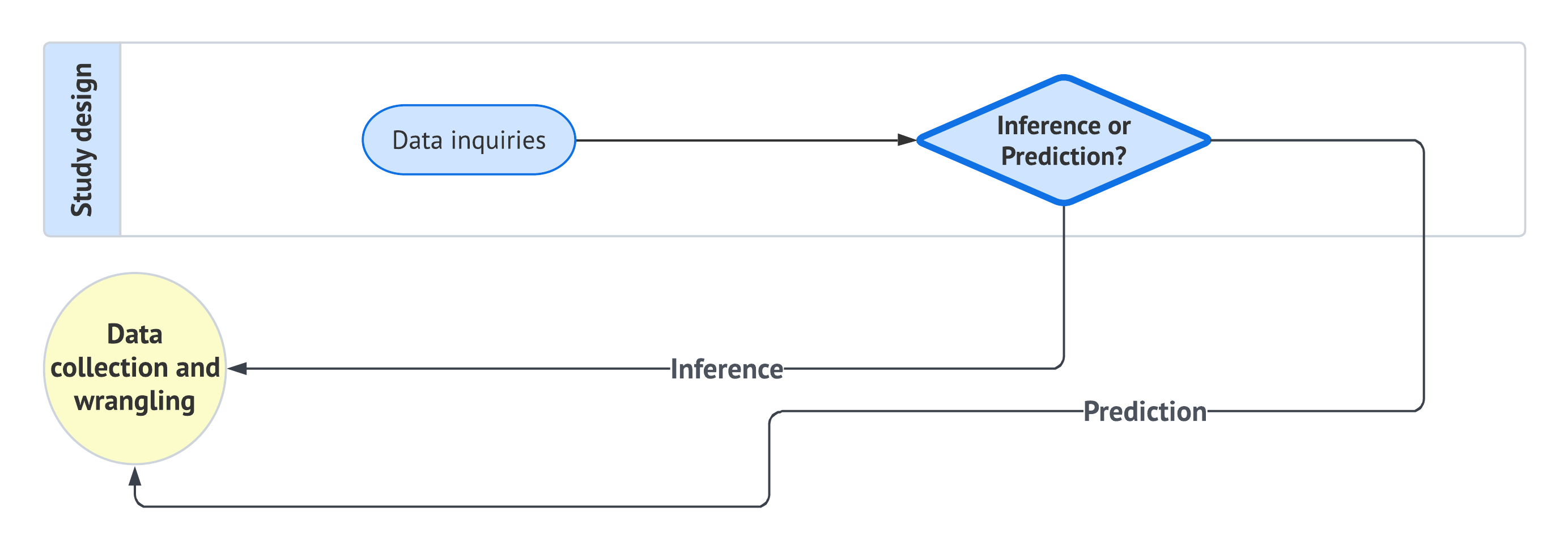

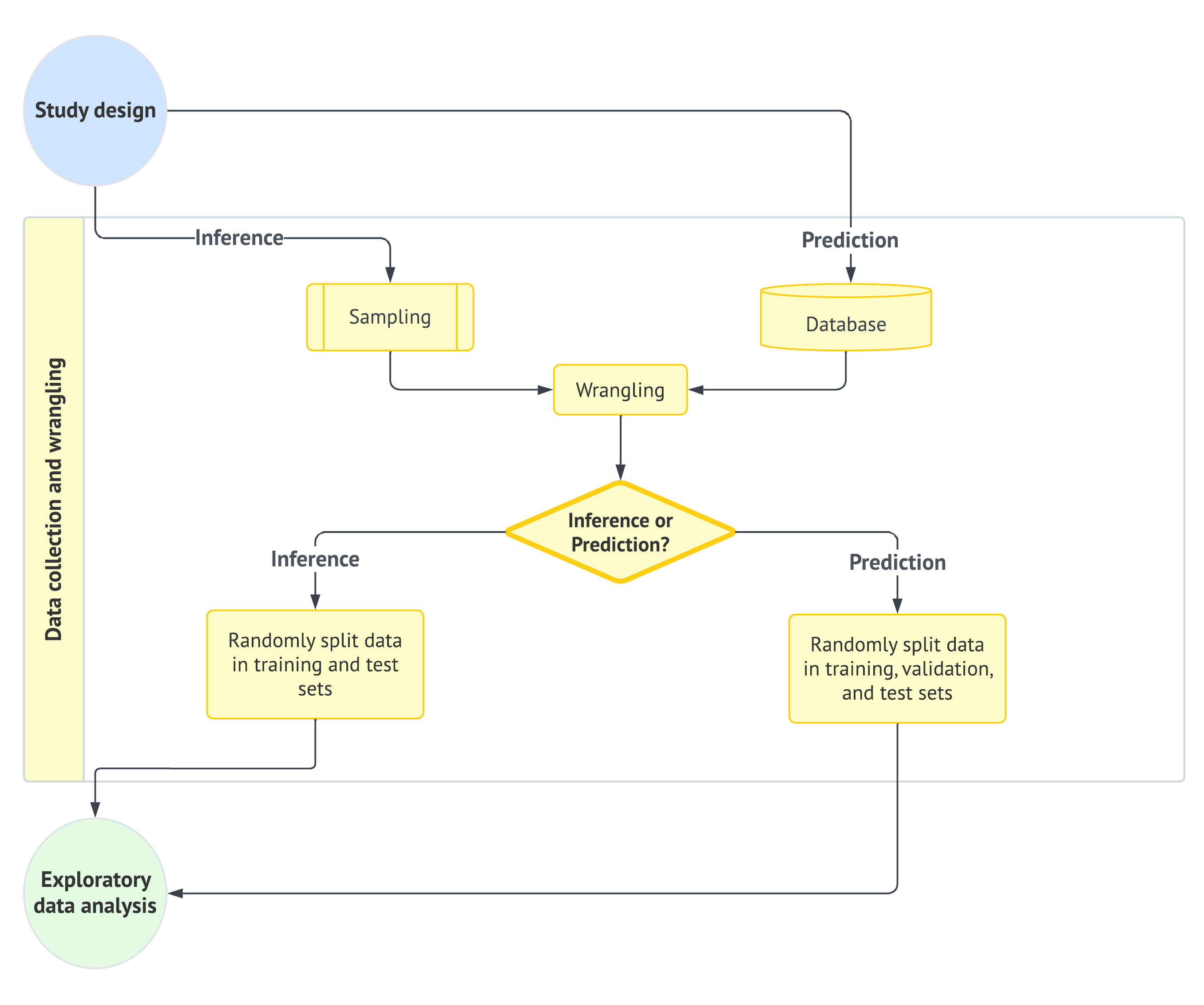

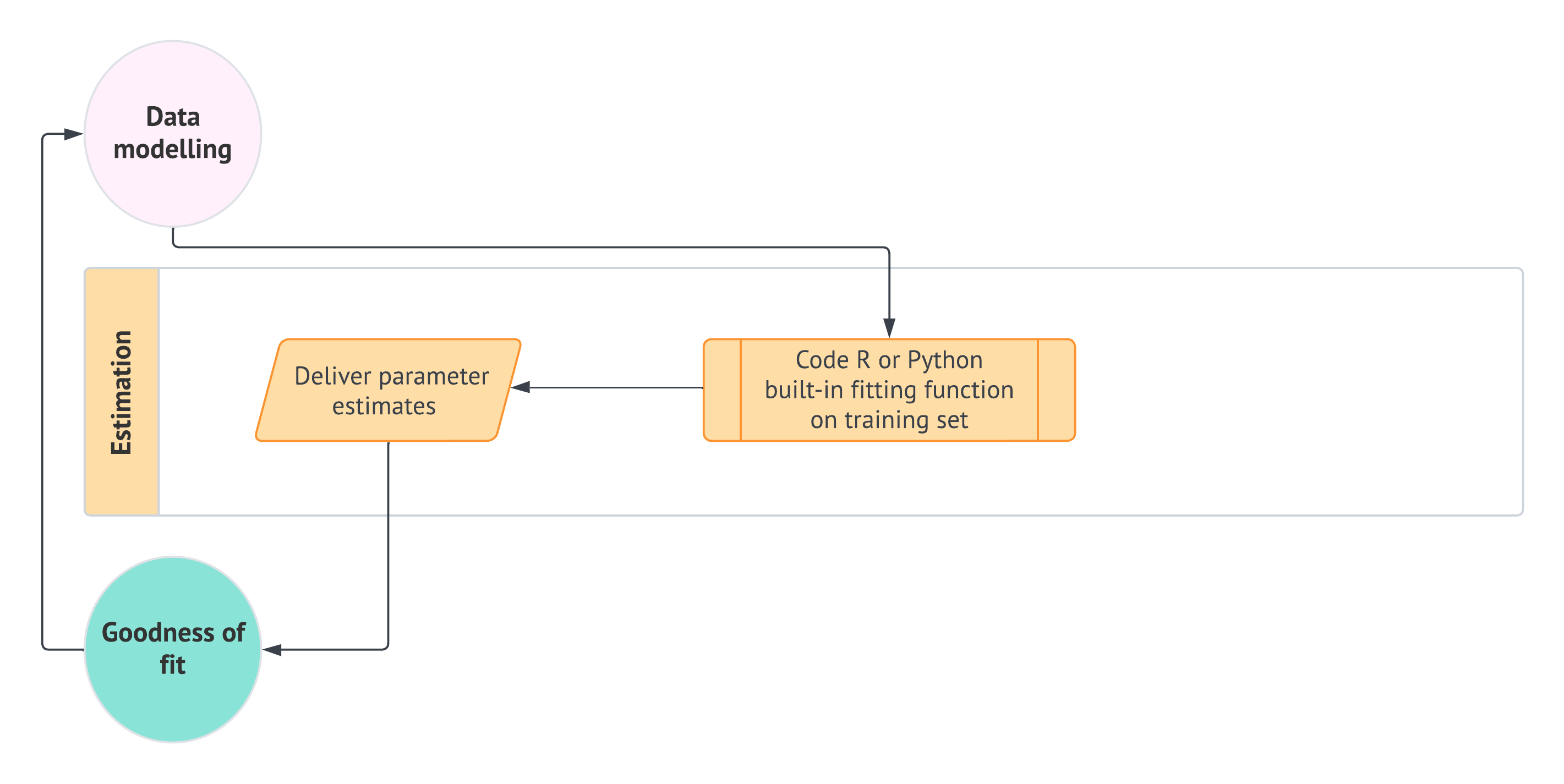

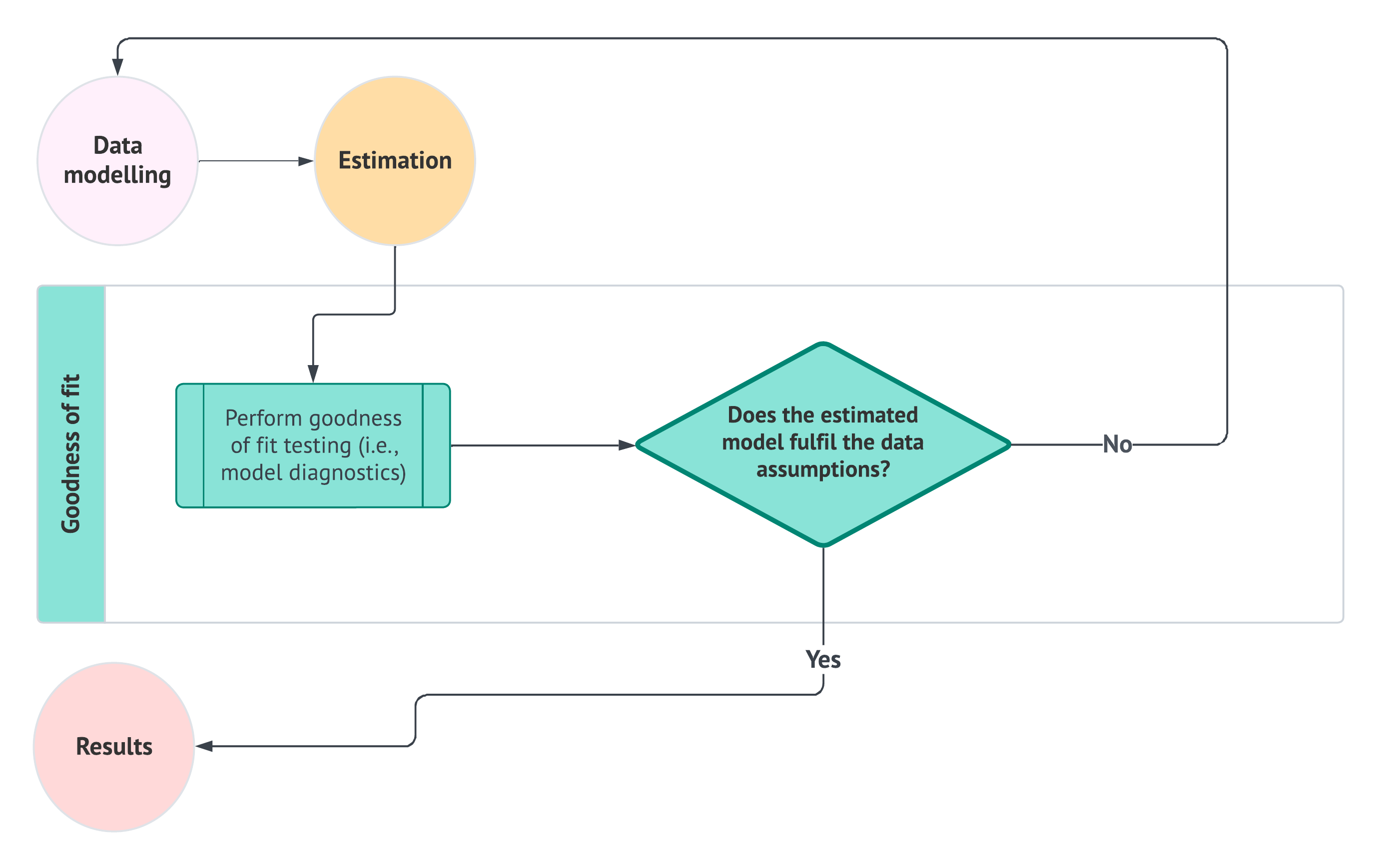

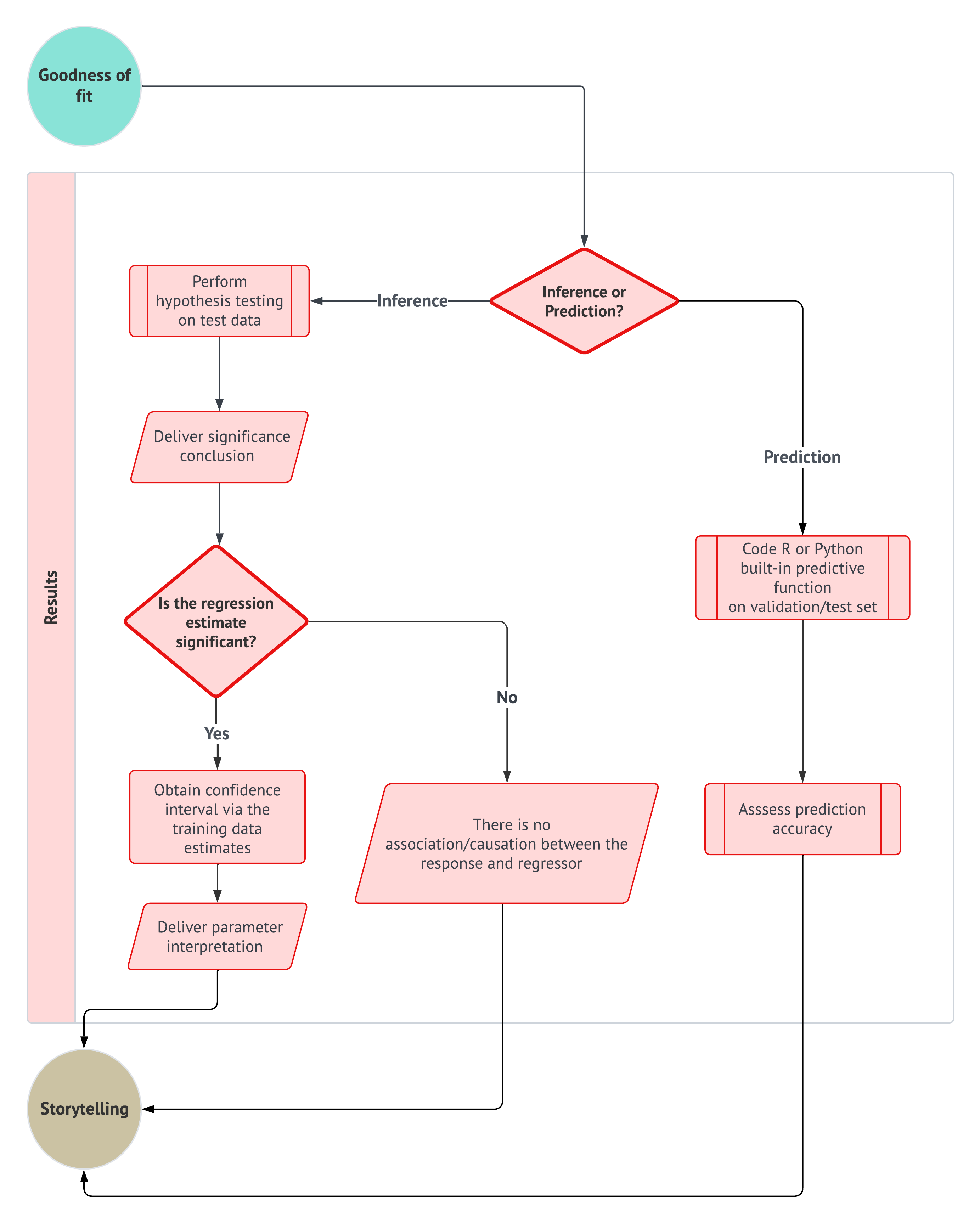

- Selecting the appropriate workflow approach based on an inferential or predictive paradigm, as shown in Figure 1.1.

- Choosing the appropriate regression model based on the response variable or outcome of interest, using the mind map in Section 1.5 (analogous to a regression toolbox).

Note that a running housing example is used in this chapter to ground these ideas in practice. Along the way, we introduce key components of model estimation, goodness-of-fit, and interpretation, including a conceptual discussion of training, validation, and test sets for predictive workflows. At the same time, the main worked examples in this chapter and in the model-specific chapters that follow will primarily rely on training and test sets, since our focus is not on model selection across competing modelling specifications.

The rationale behind the three pillars

Each data science problem involving regression analysis has unique characteristics, depending on if the inquiry is inferential or predictive. Different types of outcomes (or response variables) require distinct modelling approaches. For example, we might analyze survival times (e.g., the time until one particular equipment of a given brand fails), categorical outcomes (e.g., a preferred musical genre in the Canadian young population), or count-based outcomes (e.g., how many customers we would expect on a regular Monday morning in the branches of a major national bank), etc. Moreover, under this regression context, our analysis extends beyond the outcome itself, but we also examine how it relates to other explanatory variables (the so-called features). For instance, if we are studying musical genre preferences among young Canadians, we could explore how age groups influence these preferences or compare genre popularity across provinces.

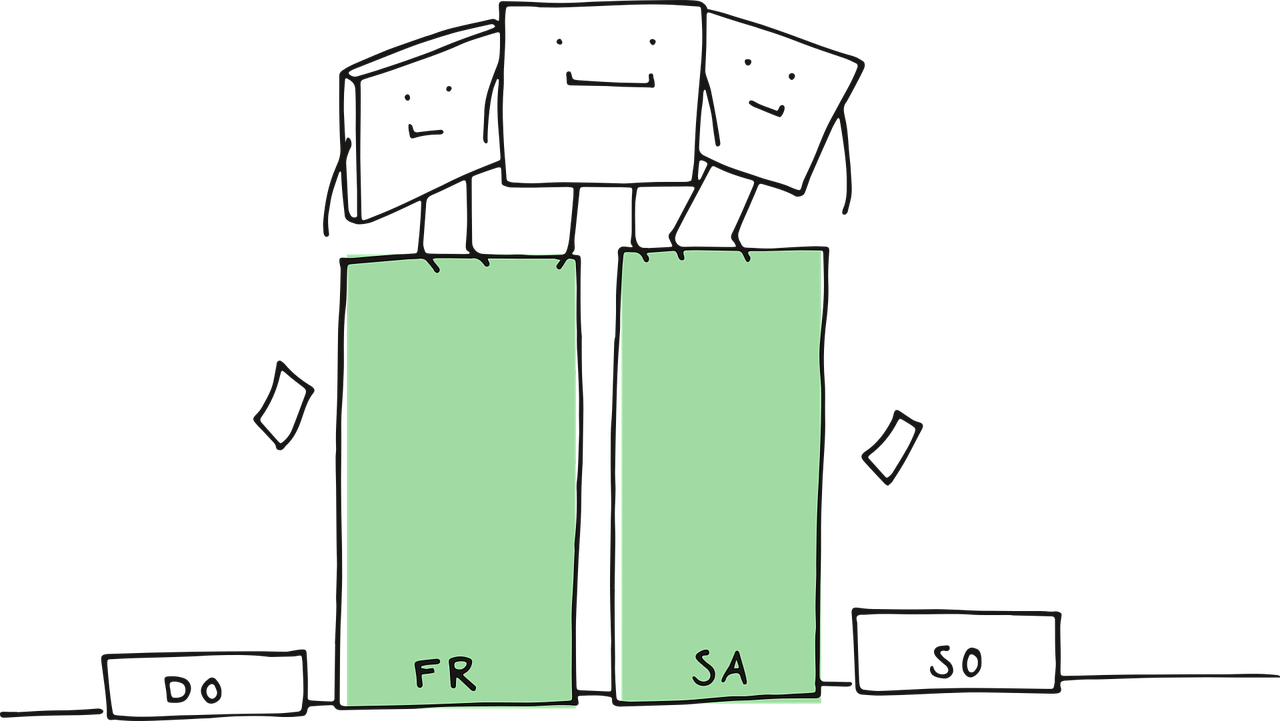

At first glance, it might seem that every regression problem should have a unique workflow tailored to its specific model. However, this is not entirely the case. In Figure 1.1, we introduce a structured regression workflow designed as a proof of concept for thirteen different regression models. Each flow is covered in a separate chapter of this book alongside a review of probability and statistics (i.e, thirteen chapters in this book aside from the probability and statistics review). This workflow standardizes our approach, making analysis more transparent and efficient while allowing us to communicate insights effectively through data storytelling. Naturally, this workflow includes decision points that determine whether the approach follows an inferential or predictive path (the second pillar). As for our third pillar, this comes into play at the data modelling stage, where the regression toolbox in Figure 1.18 guides our modelling choice based on the response variable type.

Let us establish a convention for using admonitions throughout this textbook. These admonitions will help distinguish between key concepts, important insights, and supplementary material, ensuring clarity as we explore different regression techniques. We will start using these admonitions in Section 1.2.

Definition

A formal statistical and/or machine learning definition. This admonition aims to untangle the significant amount of jargon and concepts that both fields have. When applicable, alternative terminology will be included to highlight equivalent terms across statistics and machine learning.

Heads-up!

An idea (or ideas) related to a modelling approach, a specific workflow stage, or an important data science concept. This admonition is used to flag crucial statistical or machine learning topics that warrant deeper exploration.

Tip

An idea (or ideas) that may extend beyond the immediate discussion but provides additional context or helpful background. When applicable, references to further reading will be provided.

The core idea of the above admonition arrangement is to allow the reader to discern between ideas or concepts that are key to grasp from those whose understanding might not be highly essential (but still interesting to check out in further literature). With this structure in place, we can now introduce another key resource: a common ground between machine learning and statistics which will be elaborated on in the next section.

1.2 The ML-Stats Dictionary

Machine learning and statistics often overlap, especially in regression modelling. Topics covered in a regression-focused course, under a purely statistical framework, can also appear in machine learning-based courses on supervised learning, but the terminology can differ. Recognizing this overlap, the Master of Data Science (MDS) program at the University of British Columbia (UBC) provides the MDS Stat-ML dictionary (Gelbart 2017) under the following premises:

This document is intended to help students navigate the large amount of jargon, terminology, and acronyms encountered in the MDS program and beyond.

This section covers terms that have different meanings in different contexts, specifically statistics vs. machine learning (ML).

Both disciplines have a tremendous amount of jargon and terminology. As mentioned in the Preface, machine learning and statistics construct a substantial synergy reflected in data science. Despite this overlap, misunderstandings can still happen due to differences in terminology. To prevent this, we need clear bridges between these disciplines via a ML-Stats dictionary (ML stands for Machine Learning).

Heads-up on how the ML-Stats Dictionary is built and structured!

The complete ML-Stats dictionary can be found in Appendix A. This resource builds upon the concepts introduced in the definition callout box throughout the fifteen main chapters of this textbook. The dictionary aims to clarify terminology that varies between statistics and machine learning, specifically in the context of supervised learning and regression analysis.

Terms in this dictionary related to statistics will be highlighted in blue, while terms related to machine learning will be highlighted in magenta. This color scheme is designed to help readers easily navigate between the two disciplines. With practice, you will become proficient in applying concepts from both fields.

The above appendix will be the section in this book where the reader can find all those statistical and machine learning-related terms in alphabetical order. Notable terms (either statistical or machine learning-related) will include an admonition identifying which terms (again, either statistical or machine learning-related) are equivalent or somewhat equivalent (or even not equivalent if that is the case). For instance, consider the statistical term called dependent variable:

In supervised learning, it is the main variable of interest we are trying to learn or predict, or equivalently, in a statistical inference framework, the variable we are trying explain.

Then, the above definition will be followed by this admonition:

Equivalent to:

Response variable, outcome, output or target.

Note that we have identified four equivalent terms for the term dependent variable. Furthermore, these terms can be statistical or machine learning-related.

Heads-up on the use of terminology!

Throughout this book, we will use specific concepts interchangeably while explaining different regression methods. If confusion arises, you must always check definitions and equivalences (or non-equivalences) in Appendix A.

Next, we will introduce the three main foundations of this textbook: a data science workflow, choosing the correct workflow flavour (inferential or predictive), and building your regression toolbox.

1.3 Supervised Learning and Regression Analysis

Regression analysis is one of the central tools used in statistics, data science, and scientific research to study how an outcome (or response) changes with one or more recorded characteristics. In practice, we often want to answer questions such as:

How does house price vary with square footage and number of bedrooms?

How is a health outcome associated with age and treatment status?

Can we predict a future response for a new observational unit using information already collected on similar units?

The above questions all belong to a broader learning setting in which we use labelled data to learn from past observations and extend that learning to new ones. Hence, a helpful way to organize the ideas is to move from the broadest framework to the more specifically statistical one. At the broadest level, supervised learning refers to the setting in which we observe a response together with one or more explanatory variables and use those labelled observations to learn a relationship between them.

Within supervised learning setting, regression analysis provides a statistical framework for studying such relationships. That said, what makes the statistical perspective especially rich is that it does not only seek a useful predictive rule, but also introduces a probability model to describe how the response varies, conditionally on the explanatory variables, under uncertainty. This probabilistic layer is what allows regression analysis to support not only prediction, but also effect estimation, uncertainty quantification, and formal inferential reasoning. With this progression in mind, let us now define these foundational concepts more carefully.

Definition of supervised learning

Supervised learning is a data modelling framework in which we use observed pairs \((\mathbf{x}_i, y_i)\) for the \(i\)th observation, where \(\mathbf{x}_i\)1 denotes the explanatory variables recorded for that observation, to learn a rule or function that maps explanatory variables to a response variable. The goal may be primarily predictive (accurate future predictions), primarily inferential (understanding associations or effects between \(\mathbf{x}_i\) and \(y_i\)), or a mixture of both.

Definition of response variable

A response variable, also called a dependent variable, endogenous variable, outcome, output, or target, is the measurement or quantity of main interest in a supervised learning or regression analysis problem. It is the variable whose behaviour we aim to explain, model, or predict using one or more explanatory variables.

Depending on the application, the response variable may represent a continuous quantity, a count, a binary result, a proportion, or another type of measurement. The nature of the response variable plays a fundamental role in determining the appropriate regression model and probability model for the analysis.

Definition of explanatory variable

An explanatory variable, also called a regressor, independent variable, predictor, feature, attribute, input, covariate, or, in some contexts, an exogenous variable, is a variable used to help explain, describe, or predict the behaviour of a response variable in a regression model. Explanatory variables represent the observed characteristics or conditions associated with each observational unit.

Depending on the context, explanatory variables may be quantitative or categorical, and their role may be primarily explanatory, predictive, or both.

Now that we have introduced the basic ingredients of supervised learning, we can describe where regression analysis enters more specifically. Not every supervised learning method is statistical in the same sense, but regression analysis is distinguished by the fact that it connects the observed data to an explicit modelling framework. In particular, regression methods do not merely produce predictions; they also provide a structured way to interpret relationships, estimate unknown quantities, and assess uncertainty under modelling assumptions.

Definition of regression analysis

Regression analysis is a collection of statistical methods used to study the relationship between a response variable and one or more explanatory variables. Its goals may include explaining associations, estimating effects, quantifying uncertainty, or predicting future outcomes.

More broadly, regression analysis provides a principled framework for connecting a scientific or practical question to data, a probability model, an estimation method, and an interpretation of results. The specific form of the regression model depends on the nature of the response variable, the modelling assumptions, and whether the main objective is inference, prediction, or both.

A central characteristic of regression analysis is therefore the use of a probability model. This is where the statistical perspective becomes more explicit. Rather than only asking for a rule that maps explanatory variables to a predicted response, we also ask how the response varies under uncertainty, even after accounting for the explanatory variables. This idea will become especially important in Chapter 2, where we revisit random variables and probability distributions in greater detail.

Definition of probability model

A probability model is a mathematical representation of how a random variable behaves under uncertainty in a population or system of interest. It specifies the possible values the random variable can take together with a distribution that describes how probable those values are, usually through one or more governing parameter(s).

In this textbook, this idea aligns with the notion of a generative model (to be discussed in Chapter 2), where we use a probability distribution to describe how the observed data could have been generated from the population or system under study.

All the the above definitions allow us to better understand how supervised learning and regression analysis come together in practice. Note that, in a regression problem, we observe a response variable together with one or more explanatory variables for each observational unit, and we seek to learn how the response behaves conditionally on those explanatory variables. In a statistical regression framework, this relationship is represented through a probability model, while in a supervised learning framework, it is often viewed as learning a rule that maps inputs to outputs.

To make this more concrete, suppose that for the \(i\)th observational unit we observe \(k\) explanatory variables collected in a vector \(\mathbf{x}_i\) together with a response value \(Y_i\). In regression analysis, we are interested in understanding or approximating the conditional behaviour of the response given the explanatory variables. Taking this further in an ordinary least-squares (OLS) framework for instance, a regression model to be fully elaborated in Chapter 3, a common way to express this relationship is through:

\[ Y_i = \beta_0 + \beta_1 x_{i,1} + \cdots + \beta_k x_{i,k} + \varepsilon_i, \tag{1.1}\]

where the terms \(\beta_0, \beta_1, \dots, \beta_k\) describe the systematic part of the relationship, and the random error term \(\varepsilon_i\) represents the remaining variation in the response not explained by the explanatory variables. From a supervised learning perspective, the same model can be viewed as a rule that produces fitted values or predictions from observed inputs. From a statistical perspective, however, this same expression also encodes assumptions about uncertainty, model structure, and how the response varies conditionally on the explanatory variables.

Heads-up on the role of mathematical notation!

At this stage, the notation in Equation 1.1 is only meant to illustrate the general structure of a regression model. Later in this section and throughout the textbook, we will elaborate more carefully on how explanatory variables are represented, how regression models are constructed, and how their statistical and predictive interpretations may differ depending on the context.

Now, the previous discussion also highlights an important point: regression analysis can serve different, though often overlapping, purposes. In some settings, our primary goal is inferential, meaning that we want to understand associations, estimate effects, or quantify uncertainty about how the response is related to the explanatory variables. In other settings, our primary goal is predictive, meaning that we want to obtain accurate predictions for future or unseen observational units. These two perspectives are closely connected, but they are not identical. A model that is attractive for interpretation is not always the best model for prediction, and a model that predicts well is not automatically the most useful one for formal inference.

Heads-up on inferential versus predictive regression!

Throughout this textbook, we will distinguish between inferential and predictive workflow flavours:

- Inferential regression emphasizes trustworthy interpretation, effect estimation, and uncertainty quantification under modelling assumptions.

- Predictive regression emphasizes out-of-sample performance and the ability of a model to generalize to new observations.

The above distinction will become especially important when we discuss model assessment, diagnostics, and data partitioning.

One of the most practical consequences of adopting a supervised learning perspective is the need to think carefully about how the observed random sample is used throughout the modelling process. As we will discuss in Chapter 2, regression analysis and supervised learning begin with a population or system of interest from which we observe a random sample of labelled observations. For the \(i\)th observational unit, such a labelled observation can be written as \((\mathbf{x}_i, y_i)\), where \(\mathbf{x}_i\) contains the \(k\) explanatory variables and \(y_i\) is the corresponding observed response value.

Once this observed random sample has been collected and wrangled, it is often useful to divide it into subsets that play different roles during model building and assessment. In predictive settings, this partitioning helps us evaluate how well a model generalizes to unseen observations. In inferential settings, a data split can also help us avoid double dipping (to be elaborated further in Section 1.4.3), that is, the problematic use of the same observations both to explore patterns in the data and to formally assess the resulting model-based claims. Although these subsets all come from the same original random sample, they serve different purposes and must not be confused with one another.

Definition of training set

A training set is the subset of the observed random sample used to fit or estimate one or more candidate models. In regression analysis, this means that the training set is used to estimate unknown model terms, such as regression coefficients, and to carry out classical diagnostic checks on the fitted model.

In a supervised learning context, the training set is the portion of the observed random sample from which the model primarily learns the relationship between the explanatory variables and the response variable.

Definition of validation set

A validation set is the subset of the observed random sample used to compare candidate models, modelling strategies, or tuning decisions after those models have been fitted on the training set. Its main role is to assess how well different modelling choices generalize to unseen observations before a final model is selected.

In predictive regression, the validation set is especially useful when comparing alternative model specifications, such as different sets of explanatory variables, transformations, or interaction terms. It is not primarily used for classical residual diagnostics, but rather for model comparison and predictive assessment.

Definition of test set

A test set (or testing set) is the subset of the observed random sample reserved for a final and unbiased assessment of model performance after all model-building decisions have been completed. Its role is to provide a trustworthy evaluation of how well the chosen model is expected to perform on future or unseen observations from the same population or system of interest in predictive settings.

On the other hand, in inferential settings, the test set may serve a protective role against double dipping, since it allows us to reserve part of the observed random sample for a more formal assessment (via hypothesis testing) after exploratory work has been carried out on the training set.

The distinction among these above subsets reflects a broader principle that will appear repeatedly throughout this textbook: a regression analysis is not only about fitting a model, but also about using the observed random sample in a disciplined way relative to the question we want to answer:

- For predictive inquiries, the usual logic is that the training set is used to fit candidate models, the validation set is used to compare modelling choices when needed, and the test set is used once at the end for a final out-of-sample performance check.

- For inferential inquiries, the split between training and testing can help separate exploratory work from more formal model-based conclusions via hypothesis testing, thereby reducing the risk of double dipping.

Heads-up on validation versus diagnostics and on double dipping!

A common source of confusion is to treat validation, testing, and diagnostics as if they were the same thing. They are not, because each serves a different purpose in the modelling process:

- Validation is mainly concerned with generalization, that is, whether one modelling choice predicts unseen data better than another. In predictive regression, the validation set is used to compare candidate models or modelling decisions before selecting a final one.

- Testing is concerned with obtaining a final assessment after the model-building process has been completed. In predictive settings, the test set is used to provide an honest out-of-sample evaluation of the chosen model. On the other hand, in inferential settings, holding back a test set can also help reduce double dipping, where the same observations are used both to discover patterns and to formally assess them.

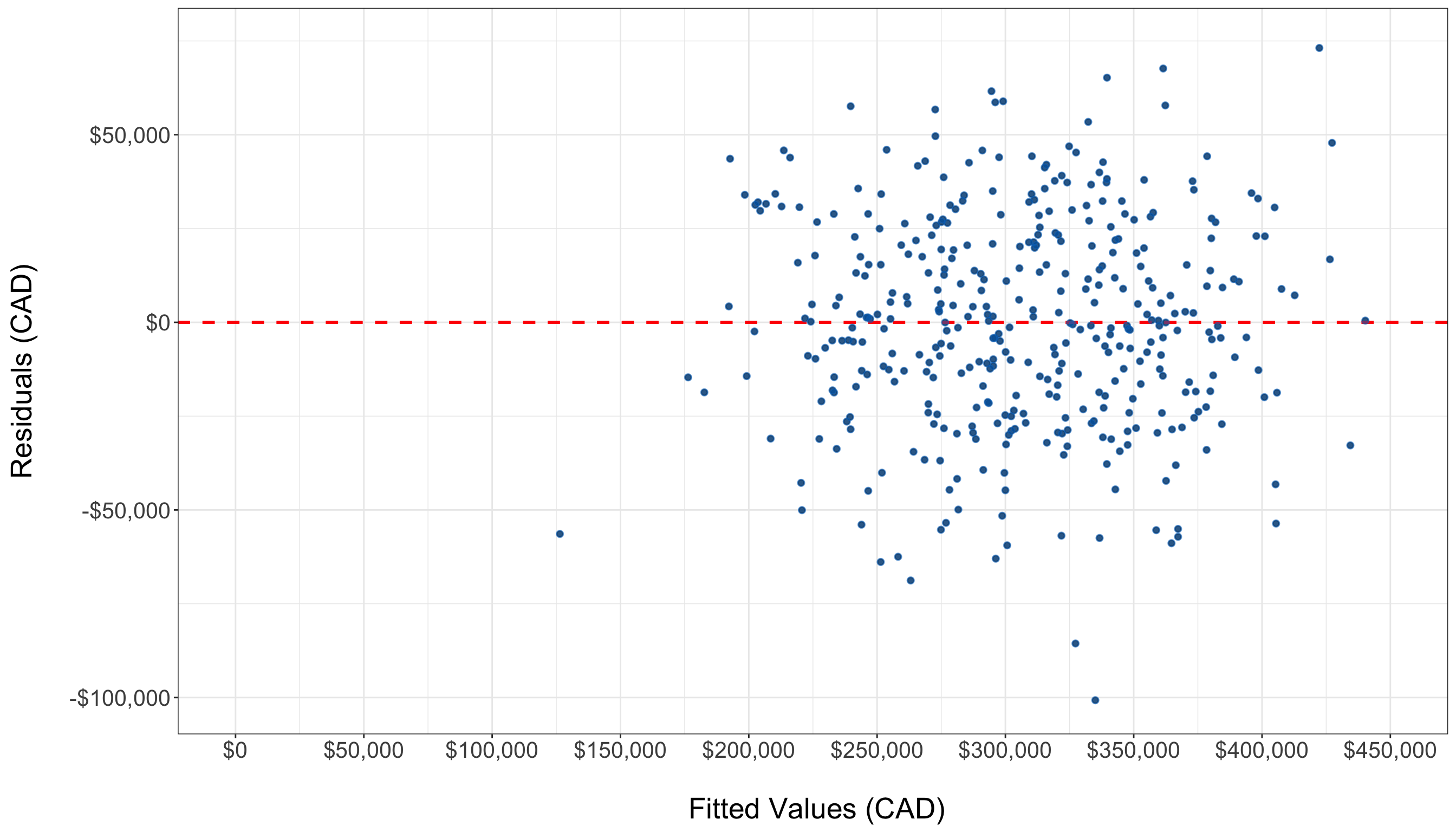

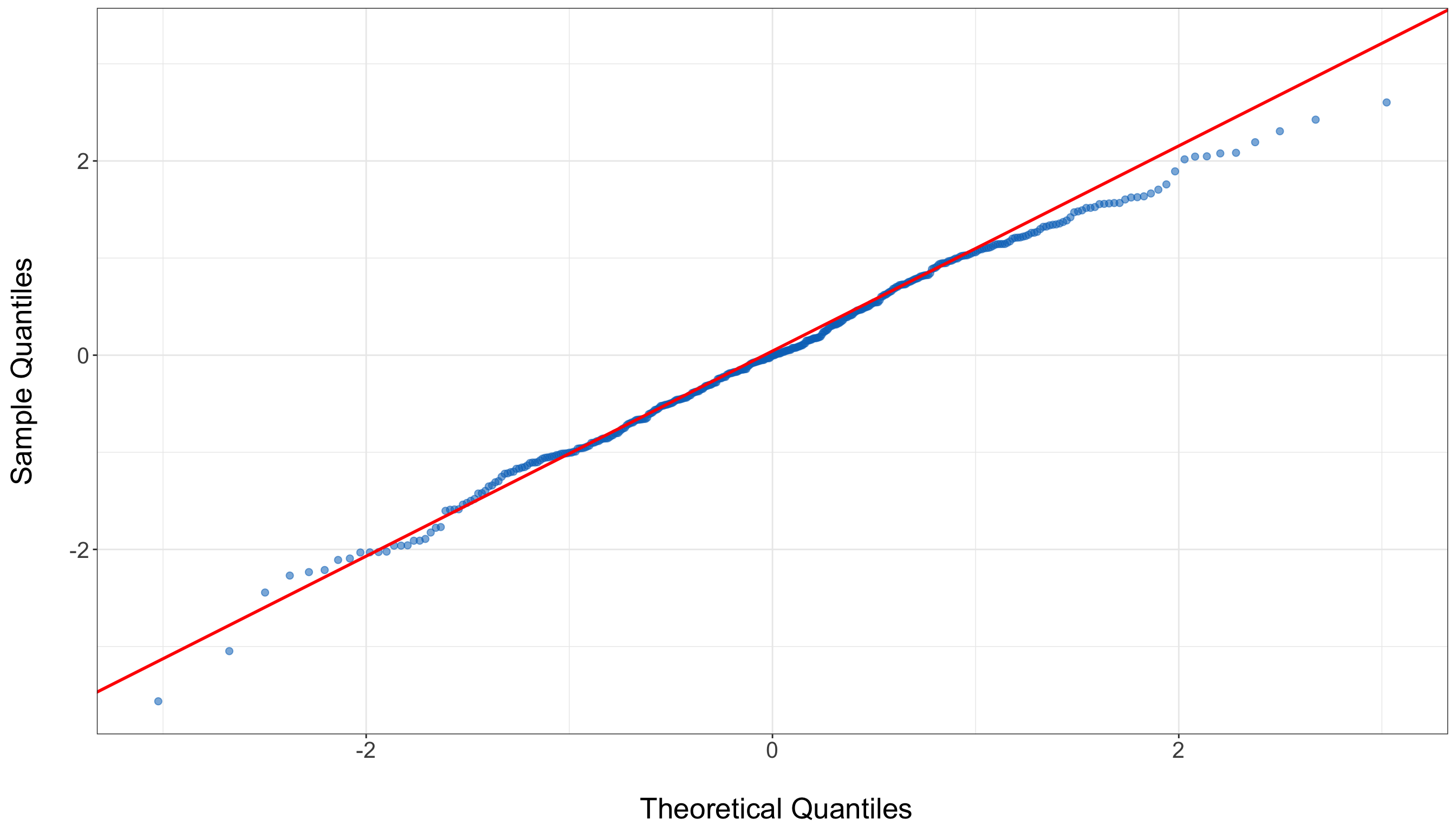

- Diagnostics are concerned with whether a fitted model shows warning signs that its assumptions or structure may be inadequate. For instance, in OLS regression, this includes checks based on the fitted training model, such as residual plots, QQ-plots, and other tools used to assess model adequacy.

Taken together, all these ideas reinforce a key message of this chapter: sound regression work requires more than selecting a model formula. We must also think carefully about the nature of the response, the role of the explanatory variables, the probability model governing uncertainty, the inferential or predictive flavour of the inquiry, and the way in which the observed random sample is allocated across the different stages of the analysis. This need for principled organization is exactly what motivates the next major ingredient of this textbook: The Data Science Workflow.

1.4 The Data Science Workflow

Understanding the data science workflow is essential for mastering regression analysis. This workflow serves as a blueprint that guides us through each stage of our analysis, ensuring that we apply a systematic approach to solving our inquiries in a reproducible way. Each of the three pillars of this textbook (data science workflow, the right workflow flavour (inferential or predictive), and a regression toolbox) are deeply interconnected. Regardless of the regression model we explore, this general workflow provides a consistent framework that helps us navigate our data analysis with clarity and purpose.

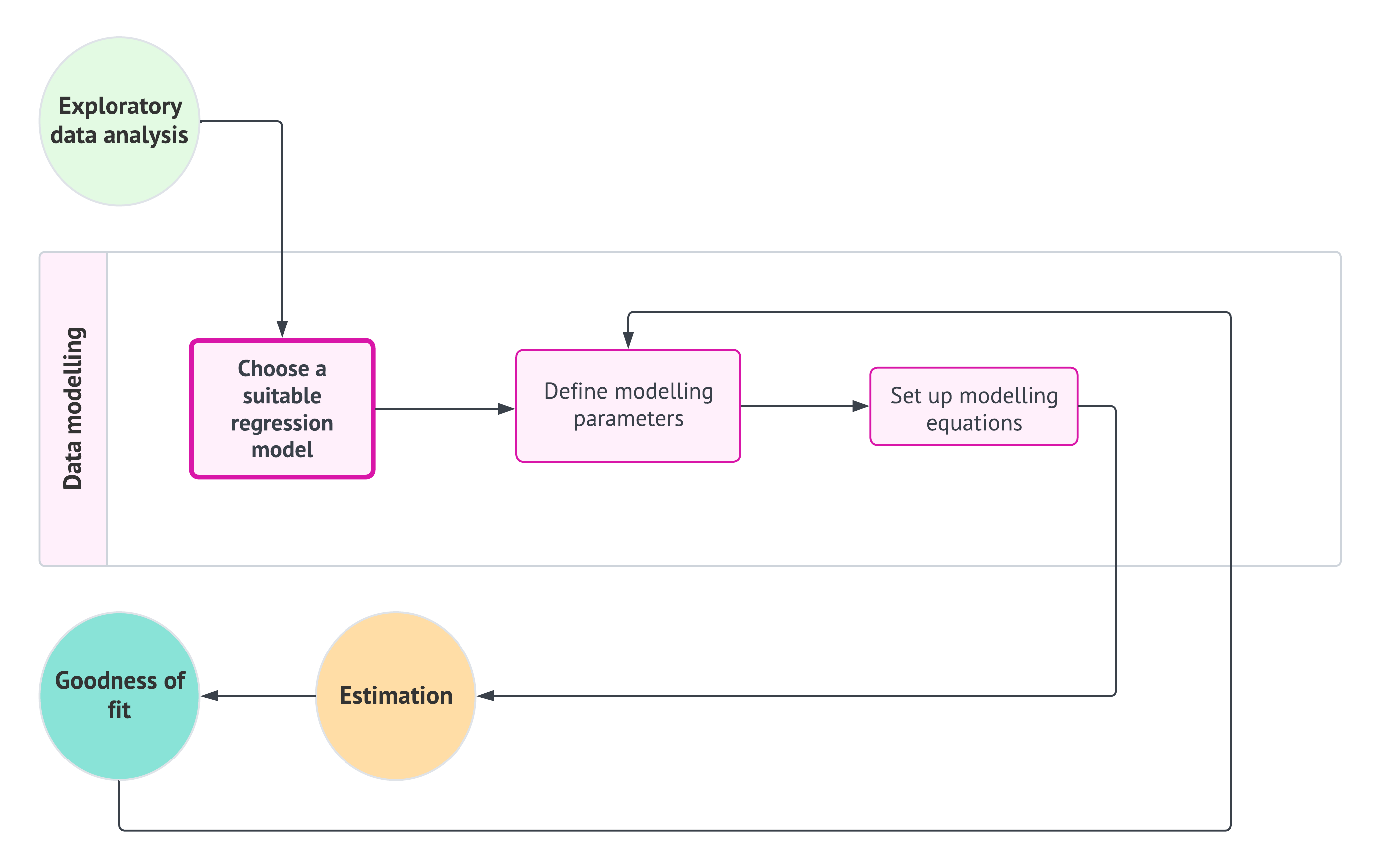

As shown in Figure 1.1, the data science workflow is composed of the following eight stages (each of which will be discussed in more detail in subsequent subsections):

- Study design: Define the research question, objectives, and variables of interest to ensure the analysis is purpose-driven and aligned with the problem at hand.

- Data collection and wrangling: Gather and clean data, addressing issues such as missing values, outliers, and inconsistencies to transform it into a usable format.

- Exploratory data analysis: Explore the data through statistical summaries and visualizations to identify patterns, trends, and potential anomalies.

- Data modelling: Apply statistical or machine learning models to uncover relationships between variables or make predictions based on the data.

- Estimation: Calculate model parameters to quantify relationships between variables and assess the accuracy and reliability of the model.

- Goodness of fit: Evaluate the model’s performance using metrics and model diagnostic checks to determine how well it explains the data.

- Results: Interpret the model’s outputs to derive meaningful insights and provide answers to the original research question.

- Storytelling Communicate the findings through a clear, engaging narrative that is accessible to a non-technical audience.

By adhering to this workflow, we ensure that our regression analysis are not only systematic and thorough but also capable of producing results that are meaningful within the context of the problem we aim to solve.

Heads-up on the importance of a formal structure in regression analysis!

From the earliest stages of learning data analysis, understanding the importance of a structured workflow is crucial. If we do not adhere to a predefined workflow, we risk misinterpreting the data, leading to incorrect conclusions that fail to address the core questions of our analysis. Such missteps can result in outcomes that are not only meaningless but potentially misleading when taken out of the problem’s context.

Therefore, it is essential for aspiring data scientists to internalize this workflow from the very beginning of their education. A systematic approach ensures that each stage of the analysis is conducted with precision, ultimately producing reliable and contextually relevant results.

1.4.1 Study Design

The first stage of this workflow is centred around defining the main statistical inquiries we aim to address throughout the data analysis process. As a data scientist, your primary task is to translate these inquiries from the stakeholders into one of two categories: inferential or predictive. This classification determines the direction of your analysis and the methods you will use:

- Inferential: The objective here is to explore and quantify relationships of association or causation between explanatory variables (commonly referred to as regressors in the models discussed in this textbook) and the response variable within the context of the specific problem at hand. For example, you might statistically seek to determine whether a particular marketing campaign (the regressor) significantly influences sales revenue (the response), and if it does, by how much.

- Predictive: In this case, the focus is on making accurate predictions about the response variable based on future observations of the regressors. Unlike inferential inquiries, where understanding the relationship between variables is key, the primary goal here is to maximize prediction accuracy. This approach is fundamental in machine learning. For instance, you might build a model to predict future sales revenue based on past marketing expenditures, without necessarily needing to understand the underlying relationship between the two.

Heads-up on the inquiry focus of this book!

In the regression chapters of this book, we will emphasize both types of inquiries. As we follow the workflow from Figure 1.1, we will explore the two pathways identified by the decision points concerning inference and prediction.

Example: Housing Sale Prices

To illustrate the study design stage, let us consider an example involving housing sale prices in a specific city. Imagine that the analysis is being prepared for a group of stakeholders that includes municipal planners, real-estate analysts, housing developers, and school-district administrators. Although they are all interested in the same housing market, they may not all want the same kind of answer. Some may want to understand which housing characteristics are associated with higher or lower sale prices, while others may want a model that can generate reasonable price predictions for houses with given features. Let us consider the following:

- If the goal is inferential, we might be interested in understanding the relationship between various factors, such as square footage, number of bedrooms, and proximity to schools, and housing sale prices. This type of inquiry may be especially relevant for stakeholders who want to understand how different housing characteristics are associated with prices once other factors are taken into account. Specifically, we would ask questions like:

How does the number of bedrooms affect the price of a house, once we account for other factors?

- If the goal is predictive, we would focus on estimating a model that can accurately predict the price of a house based on its features (i.e., the characteristics of a given house), regardless of whether we fully understand how each feature contributes to the price. This type of inquiry may be especially relevant for stakeholders who want a practical pricing tool for new houses entering the market. Hence, we would be able to answer questions such as:

What would be the predicted price of a rural house with 3,500 square feet and 3 bedrooms located on a block where the closest school is at 2.5 km?

In both cases, the study design stage involves clearly defining these objectives and determining the appropriate data modelling methods to address them. This stage sets the foundation for all subsequent steps in the data science workflow. After establishing the study design, the next step is data collection and wrangling, as shown in Figure 1.2.

1.4.2 Data Collection and Wrangling

Once we have clearly defined our statistical questions, the next crucial step is to collect the data that will form the basis of our analysis. The way we collect this data is vital because it directly affects the accuracy and reliability of our results:

- For inferential inquiries, we focus on understanding populations or systems that we cannot fully observe. These populations are governed by characteristics (referred to as parameters) that we want to estimate. Because we cannot study every individual in the population or system, we collect a smaller, representative subset called a sample. The method we use to collect this sample (known as sampling) is crucial. A proper sampling method ensures that our sample reflects the larger population or system, allowing us to make accurate and precise generalizations (i.e., inferences) about the entire population or system. After collecting the sample, it is common practice to randomly split the data into training and test sets. This split allows us to build and assess our models, ensuring that the findings are robust and not overly tailored to the specific data at hand.

- For predictive inquiries, our goal is often to use existing data to make predictions about future events or outcomes. In these cases, we usually work with large datasets (databases) that have already been collected. Instead of focusing on whether the data represents a population (as in inferential inquiries), we focus on cleaning and preparing the data so that it can be used to train models that make accurate predictions. Conceptually, after wrangling the data, it may be useful to split it into training, validation, and test sets. However, throughout the main worked examples of this textbook, we will usually rely on training and test sets only, since our emphasis is not on comparing many competing modelling specifications. A fuller workflow with an explicit validation set is deferred to ?sec-validation-workflow.

Tip on sampling techniques!

Careful attention to sampling design is a crucial step in any research aimed at supporting valid regression-based inference. The selection of an appropriate sampling design should be guided by the structural characteristics of the population as well as the specific goals of the analysis. A well-designed sampling strategy enhances the accuracy, precision, and generalizability of parameter estimates derived from regression models, particularly when the intention is to extend model-based conclusions beyond the observed data to the whole population or system.

Below, we summarize some commonly used probability-based sampling designs, each of which has distinct implications for model validity and estimation efficiency:

- Simple random sampling: Every unit in the population has an equal probability of selection. While this method is straightforward to implement and analyze, it may be inefficient or impractical for populations with heterogeneous subgroups.

- Systematic sampling: Sampling occurs at fixed intervals from an ordered list, starting from a randomly chosen point. This design can improve efficiency under certain ordering schemes, but caution is necessary to avoid biases related to periodicity.

- Stratified sampling: The population is divided into mutually exclusive strata based on key characteristics (e.g., age, income, region, etc.). Samples are drawn within each stratum, often in proportion to the strata sizes or based on optimal allocation. This approach increases precision for subgroup estimates and enhances overall model efficiency.

- Cluster sampling: The population is divided into naturally occurring clusters (e.g., households, schools, geographic units, etc.), and entire clusters are sampled randomly. This design is often preferred for cost efficiency, but it typically requires adjustments for intracluster correlation during analysis.

In the context of our regression-based inferential framework, it is necessary to carefully plan data collection and preparation around the sampling strategy. The choice of sampling design can influence not only model estimation but also the interpretation and generalizability of the results. While this textbook does not provide an exhaustive treatment of sampling theory, we recommend Lohr (2021) for an in-depth reference. Their work offers both theoretical insights and applied examples that are highly relevant for data scientists engaged in model-based inference.

Example: Collecting Data for Housing Inference and Predictions

Let us continue with our housing example to illustrate the above concepts:

- Inferential Approach: Suppose we want to understand how the number of bedrooms is associated with the housing sale prices in a city. To do this, we would collect a sample of house sales that accurately represents the city’s entire housing market. For instance, we might use stratified sampling to ensure that we include houses from different neighbourhoods in proportion to how common they are. After collecting the data, we would split it into training and test sets. The training set helps us build our model and estimate the relationship between variables, while the test set allows us to evaluate how well our findings generalize to new data.

- Predictive Approach: If our goal is to predict the selling price of a house based on its features (such as size, number of bedrooms, and location), we would gather a large dataset of recent house sales. This data might come from a real estate database that tracks the details of each sale. Before we can use this data to train a model, we would clean it by filling in any missing information, converting data to a consistent format, and making sure all variables are ready for analysis. In a more general supervised learning workflow, we might then split the data into training, validation, and test sets. However, throughout this textbook, our main predictive examples will usually work with training and test sets only, since the emphasis is not on fine-tuning among several competing model specifications.

As shown in Figure 1.3, the data collection and wrangling stage is fundamental to the workflow. It directly follows the study design and sets the stage for exploratory data analysis.

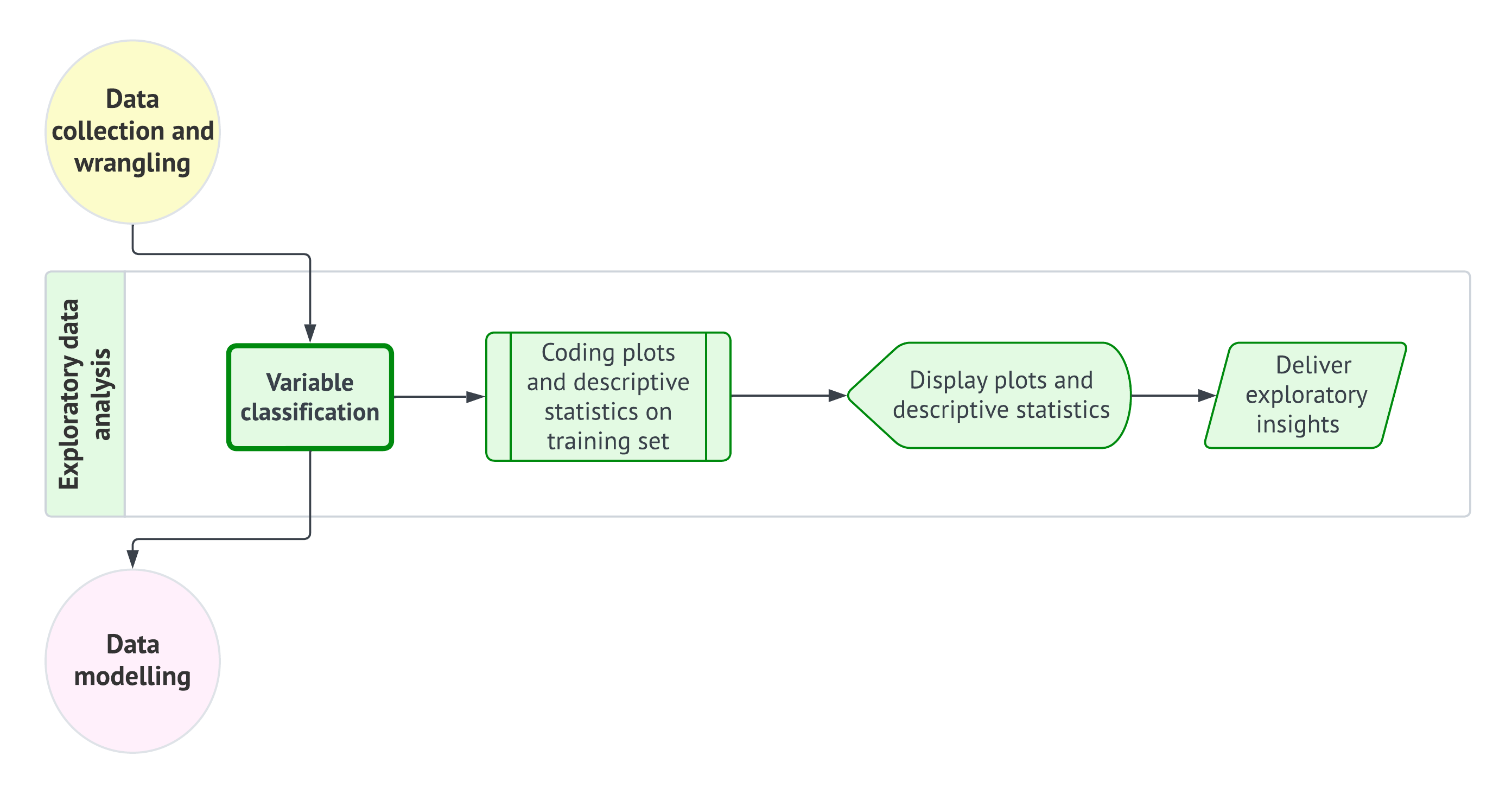

1.4.3 Exploratory Data Analysis

Before diving into data modelling, it is crucial to develop a deep understanding of the relationships between the variables in our training data. This is where the third stage of the data science workflow comes into play: exploratory data analysis (EDA). EDA serves as a vital process that allows us to visualize and summarize our data, uncover patterns, detect anomalies, and test key assumptions that will inform our modelling decisions. Our structured approach to EDA is visually summarized in Figure 1.4, which shows the sequential steps from variable classification to the delivery of exploratory insights.

As indicated above, the first step in EDA is to classify our variables according to their types. This classification is essential because it guides our choice of analysis techniques and models. Specifically, we need to determine whether each variable is discrete or continuous, and whether it has any specific characteristics such as being bounded or unbounded.

-

Response (i.e., the \(Y\)):

- Determine if the response variable is discrete (e.g., binary, count-based, categorical) or continuous.

- If it is continuous, let us consider whether it is bounded (e.g., percentages that range between \(0\) and \(100\)) or unbounded (e.g., a variable like company profits/losses that can take on a wide range of values).

-

Regressors (i.e., the \(x\)s):

- For each regressor, we must identify whether it is discrete or continuous.

- If a regressor is discrete, let us classify it further as binary, count-based, or categorical.

- If a regressor is continuous, let us determine whether it is bounded or unbounded.

This classification scheme helps us select the appropriate visualization and statistical methods for our analysis, as different variable types often need different approaches. It ensures that we are well-equipped to make the right choices in our analyses.

After classifying your variables, the next step is to create visualizations and calculate descriptive statistics using our training data. This involves coding plots that can reveal the underlying distribution of each variable and the relationships between them. For instance, we might create histograms to visualize distributions, scatter plots to explore relationships between continuous variables, and box plots to compare discrete and categorical variables against a continuous variable.

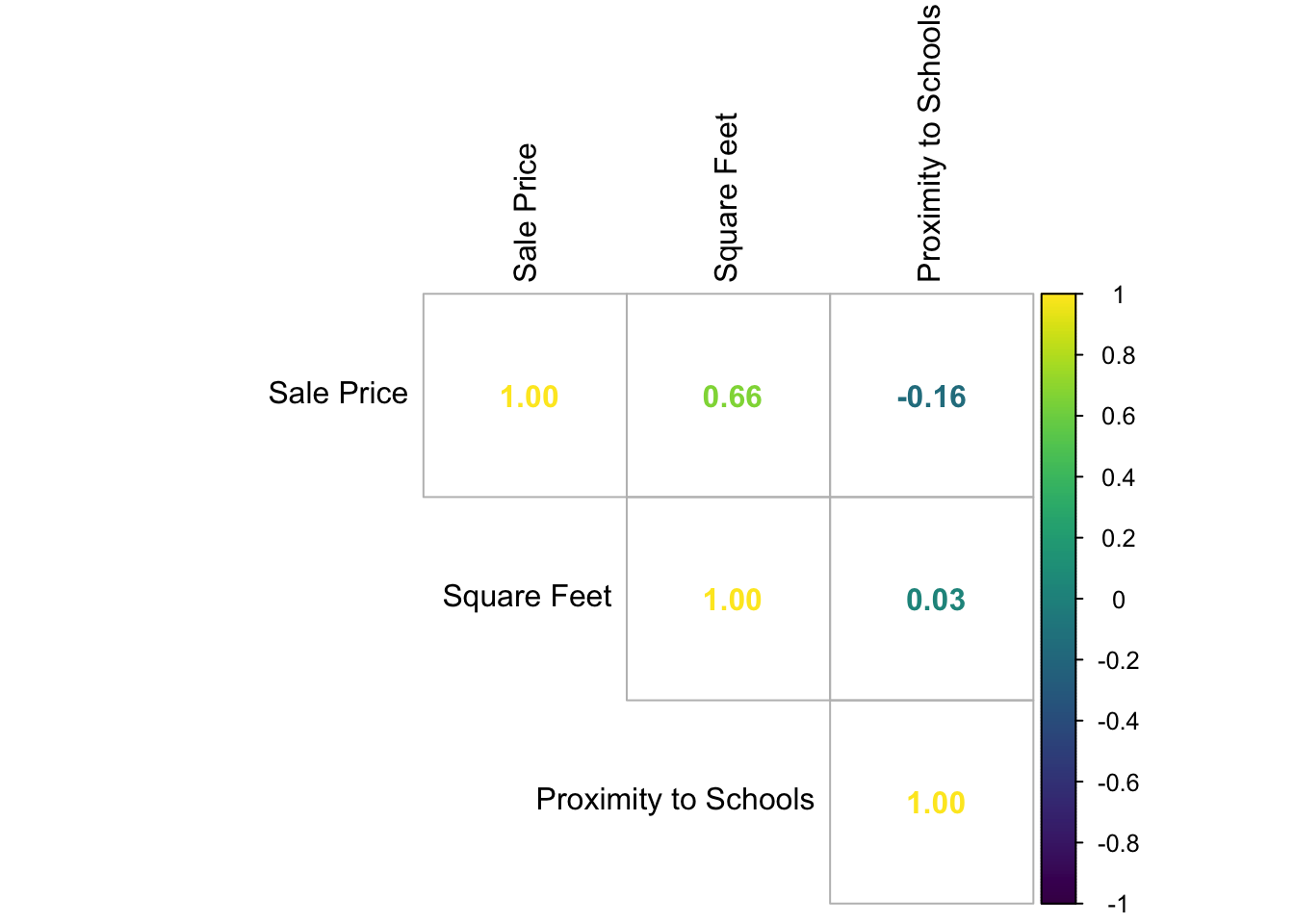

Alongside these visualizations, it is important to calculate key descriptive statistics such as the mean, median, and standard deviation if our variables are numeric. These statistics provide a summary of our data, offering insights into central tendency and variability. We might also use a correlation matrix to assess the strength of relationships between continuous variables.

Once we have generated these plots and statistics, they should be displayed in a clear and logical manner. The goal here is to interpret the data and draw preliminary conclusions about the relationships between the observed variables. Presenting these findings effectively helps to uncover key descriptive insights and prepares you for the subsequent modelling stage. Finally, the insights gained from our EDA must be clearly articulated. This involves summarizing the key findings and considering their implications for the next stage of the workflow—data modelling. Observing patterns, correlations, and potential outliers in this stage will inform your modelling approach and ensure that it is grounded in a thorough and informed analysis.

Heads-up on the use of EDA to deliver inferential conclusions!

EDA plays a critical role in uncovering patterns, detecting anomalies, and generating hypotheses. However, it is important to emphasize that the results of EDA should not be generalized beyond the specific sample data being analyzed. EDA is inherently descriptive and focused on the observed sample, and it is not intended to support inferential claims about larger populations. The insights gained from EDA are contingent on the observed sample and may not accurately reflect systematic relationships within the broader population. Nevertheless, EDA can provide valuable information to inform our modelling decisions.

Generalizing findings to a larger population requires formal statistical inference, which takes into account sampling variability, model uncertainty, and the precision of estimates. This is particularly important in regression analysis, where extending patterns observed in a sample to the wider population needs rigorous modelling assumptions, estimation procedures, and a quantification of uncertainty. Treating EDA findings as if they were inferential conclusions can lead to misleading interpretations throughout our data science workflow.

Example: EDA for Housing Data

To illustrate the EDA process, we will follow it within the context of the housing example used in the previous two workflow stages, utilizing simulated data. Suppose we have a sample of \(n = 2,000\) houses drawn from various Canadian cities through cluster sampling. As shown in Table 1.1, our earlier inferential and predictive inquiries focus on housing sale price in CAD as our response variable in a regression context. Note that this numeric response cannot be negative, which classifies it as positively unbounded. Additionally, Table 1.1 provides the relevant details for the regressors in this case: the number of bedrooms, square footage, neighbourhood type, and proximity to schools. Note that we also indicate the coding names of all the variables involved.

| Variable | Type | Scale | Model Role | Coding Name |

|---|---|---|---|---|

| Housing Sale Price (CAD) | Continuous | Positively unbounded | Response | sale_price |

| Number of Bedrooms | Discrete | Count | Regressor | bedrooms |

| Square Footage | Continuous | Positively unbounded | Regressor | sqft |

| Neighbourhood Type (Rural, Suburban or Urban) | Discrete | Categorical | Regressor | neighbourhood |

| Proximity to Schools (km) | Continuous | Positively unbounded | Regressor | school_distance |

Before continuing with this housing example, let us make a quick note on this textbook’s coding delivery.

Heads-up on coding tabs!

You might be wondering:

Where do we begin with some

RorPythoncode?

It is time to introduce our very first lines of code and provide some explanations about the coding approach in this book. Our goal is to make this book “bilingual,” meaning that all hands-on coding practices can be performed in either R or Python. Whenever we present a specific proof of concept or data modelling exercise, you will find two tabs: one for R and another for Python. We will first show the input code, followed by the output.

With this format, you can choose your coding journey based on your language preferences and interests as you progress throughout the book.

Having clarified the bilingual nature of this book with respect to coding, let us load this sample of \(n = 2,000\) houses in both R and Python. For Python, we will need the {pandas} library. Table 1.2 and Table 1.3 show the first 100 rows of this full dataset and R and Python, respectively.

# Importing library

import pandas as pd

# Loading dataset

housing_data = pd.read_csv("data/housing_data.csv")

# Showing the first 100 houses of the full dataset

print(housing_data.head(100))Tip on this simulated housing data!

The housing_data mentioned above is not an actual dataset; it is a simulated one designed to effectively illustrate our data science workflow in this chapter. This simulated dataset will somehow enable us to meet the assumptions of the chosen model during the data modelling stage outlined in Section 1.4.4. If you would like to learn more about this generative modelling process, you can refer to the provided R script.

Now, we will randomly split the sampled data into training and testing sets for both inferential and predictive inquiries. Specifically, 20% of the data will be allocated to the training set, while the remaining 80% will serve as the testing set. Although we formally introduced the idea of a validation set earlier in this chapter, we will not create one for this housing example.

Tip on why we will not use validation sets in this housing example!

Even though validation sets are an important part of a broader supervised learning framework, we will not use one in this chapter’s housing example or in the main worked examples that follow throughout the textbook. The reason is that our pedagogical focus is not on model selection across competing modelling specifications. Rather, we aim to show how a regression analysis proceeds through the data science workflow once an appropriate modelling strategy has already been identified for the response type and the inquiry of interest.

Accordingly, the housing example in this chapter uses a training set to support exploratory data analysis, estimation, and diagnostics, and a test set to support final assessment and, when relevant, protection against double dipping. If you would like to see the same housing dataset used in a fuller predictive workflow with an explicit validation set, a dedicated appendix will be provided later in ?sec-validation-workflow.

Now, the below codes do the following:

-

R: The code in Listing 1.1 executes an 80/20 random split of thehousing_datadataset using the {rsample} package (Frick et al. 2025). Theset.seed()function ensures reproducibility, whileinitial_split()partitions the data into training and testing subsets. The resulting split object is then passed totraining()andtesting()to extract the corresponding datasets. A sanity check follows, wheredim()andnrow()are used to inspect the shapes of each subset and to compute their observed proportions, confirming that the split aligns with the intended allocation. -

Python: The code in Listing 1.2 performs an analogous 80/20 partition ofhousing_datausingtrain_test_split()from {scikit-learn} (Pedregosa et al. 2011), withrandom_stateensuring reproducibility. The function returns the training and testing subsets directly. A subsequent sanity check uses.shapeandlen()to inspect the size of each subset and to verify the observed proportions of the split, ensuring that the partitioning matches the expected configuration before proceeding with further modelling steps. Note that we also use the {numpy} library (Harris et al. 2020).

Heads-up on the different training and testing sets obtained via R and Python!

It turns out that both the {rsample} package in R and {scikit-learn} in Python utilize different pseudo-random number generators. As a result, they produce different training and testing data splits, even when using the same seed values.

# Loading library

library(rsample)

# Seed for reproducibility

set.seed(123)

# Randomly splitting into training and testing sets

housing_data_splitting <- initial_split(housing_data,

prop = 0.2

)

# Assigning data points to training and testing sets

training_data <- training(housing_data_splitting)

testing_data <- testing(housing_data_splitting)

# Sanity checks

n_total <- nrow(housing_data)

n_train <- nrow(training_data)

n_test <- nrow(testing_data)

cat(sprintf(

"Training shape: %d %d\nTesting shape: %d %d\n\nTraining proportion: %.3f\nTesting proportion: %.3f\n",

nrow(training_data), ncol(training_data),

nrow(testing_data), ncol(testing_data),

n_train / n_total,

n_test / n_total

))Training shape: 400 5

Testing shape: 1600 5

Training proportion: 0.200

Testing proportion: 0.800# Importing libraries

from sklearn.model_selection import train_test_split

import numpy as np

# Seed for reproducibility

random_state = 123

# Randomly splitting into training and testing sets

training_data, testing_data = train_test_split(

housing_data,

test_size=0.8,

random_state=random_state

)

# Sanity checks

n_total = len(housing_data)

n_train = len(training_data)

n_test = len(testing_data)

print(

f"Training shape: {training_data.shape}\n"

f"Testing shape: {testing_data.shape}\n\n"

f"Training proportion: {n_train/n_total:.3f}\n"

f"Testing proportion: {n_test/n_total:.3f}"

)Training shape: (400, 5)

Testing shape: (1600, 5)

Training proportion: 0.200

Testing proportion: 0.800In addition, the code below displays the first 100 rows of our training data, which is a subset of size equal to 400 data points.

# Showing the first 100 houses of the training set

head(training_data, n = 100)# Showing the first 100 houses of the training set

print(training_data.head(100))Due to the use of different pseudo-random number generators for data splitting in R and Python, the training_data in the tables above differs. Now, let us make a necessary clarification about why we need to split the data in inferential inquiries.

Heads-up on data splitting for inferential inquiries!

In machine learning, data splitting is a foundational practice designed to prevent data leakage in predictive inquiries. However, you may wonder:

Why should we also split the data for inferential inquiries?

In the context of statistical inference, especially when making claims about population parameters, data splitting plays a different but important role: it helps prevent double dipping. Double dipping refers to the misuse of the same data for both exploring hypotheses (as in EDA) and formally testing those hypotheses. This practice undermines the validity of inferential claims by increasing the probability of Type I errors—incorrectly rejecting the null hypothesis \(H_0\) when it is actually true for the population under study.

To illustrate this, consider conducting a one-sample \(t\)-test in a double-dipping scenario for a population mean \(\mu\). Suppose we first observe a sample mean of \(\bar{x} = 9.5\) (i.e., an EDA summary statistic), and then decide to test the null hypothesis

\[\text{$H_0$: } \mu \geq 10\]

against the alternative hypothesis

\[\text{$H_1$: } \mu < 10,\]

after performing EDA on the same data. If we proceed with the formal \(t\)-test using that same data, we are essentially tailoring the hypothesis to fit our sample. Empirical simulations can show that such practices lead to inflated false positive rates, which threaten the reproducibility and integrity of statistical inference.

Unlike predictive modelling, data splitting is not a routine practice in statistical inference. However, it becomes relevant when the line between exploration and formal testing is blurred. For more information on double dipping in statistical inference, Chapter 6 of Reinhart (2015) offers in-depth insights and some real-life examples.

After classifying the variables and splitting our data, we will move on to coding the plots and calculating the summary statistics.

Heads-up on the use of R-generated training and testing for the rest of the data science workflow!

We have clarified that both R and Python produce different random data splits, even when using the same seeds. Therefore, in all the following Python code snippets related to this housing price case, we will be utilizing both the training and testing sets generated by the R-based data splitting. This approach ensures consistency in our coding outputs.

If you want to reproduce all these outputs in Python using Quarto (Allaire et al. 2025), while utilizing the R-generated sets, you can import these datasets from the R environment using the {reticulate} package (Ushey, Allaire, and Tang 2025).

As we move forward, we provide a list of plots and summary statistics, along with their corresponding EDA outputs and interpretations. This is based on our training data, which has a size of 400. Note that we are not providing the code to generate all of the EDA output directly (though you can find the R source here). However, subsequent chapters will include both R and Python code snippets to generate the corresponding EDA insights. Below is the list:

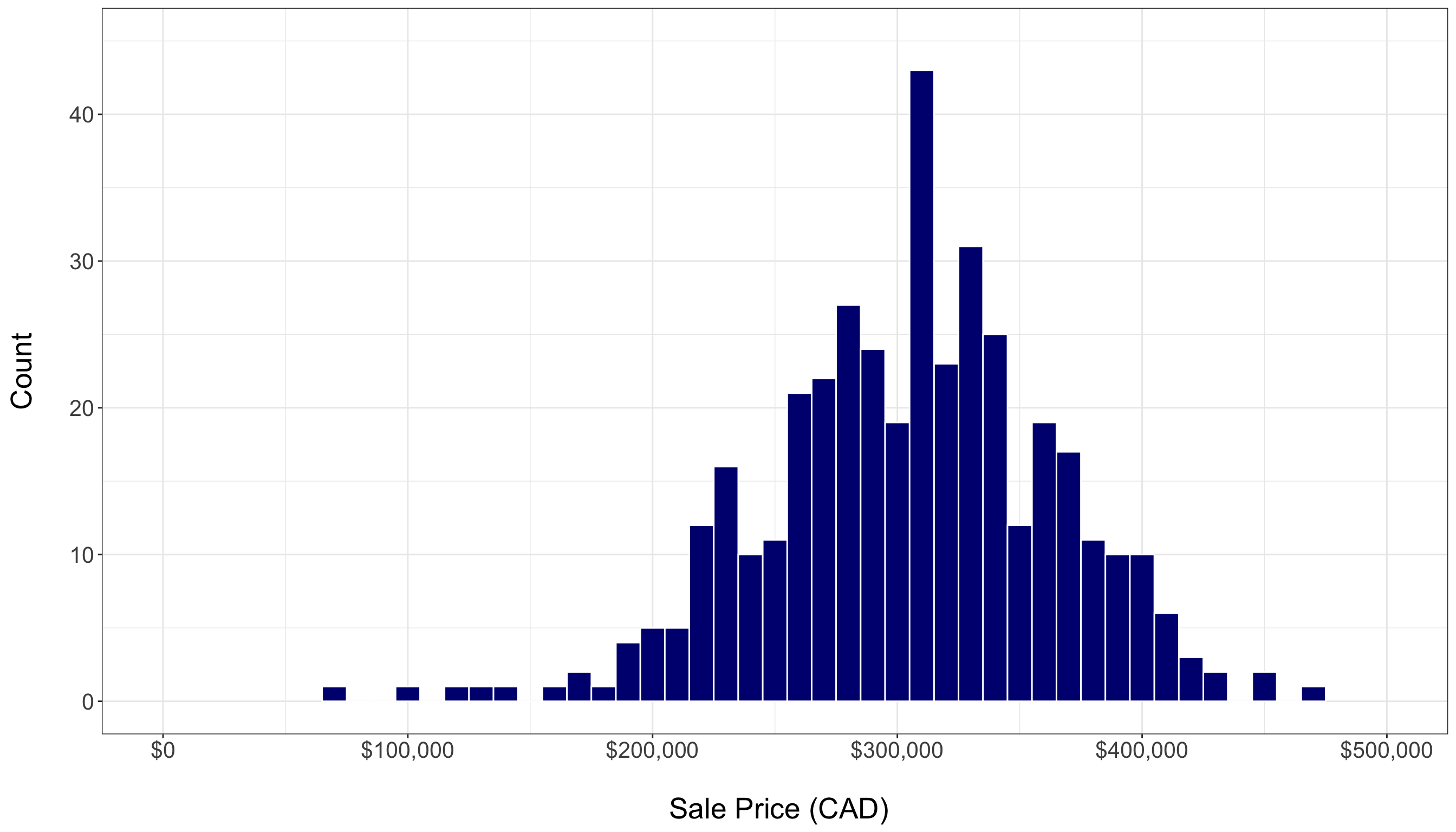

- A histogram of housing sale prices, as in Figure 1.5, shows the response’s distribution and helps identify any outliers. The training set reveals a fairly symmetric distribution of sale prices, with a noticeable concentration of sales between \(\$200,000\) and \(\$400,000\). However, there are a few outliers. Even with just 20% of the total data, this plot provides valuable graphical insights into central tendency and variability.

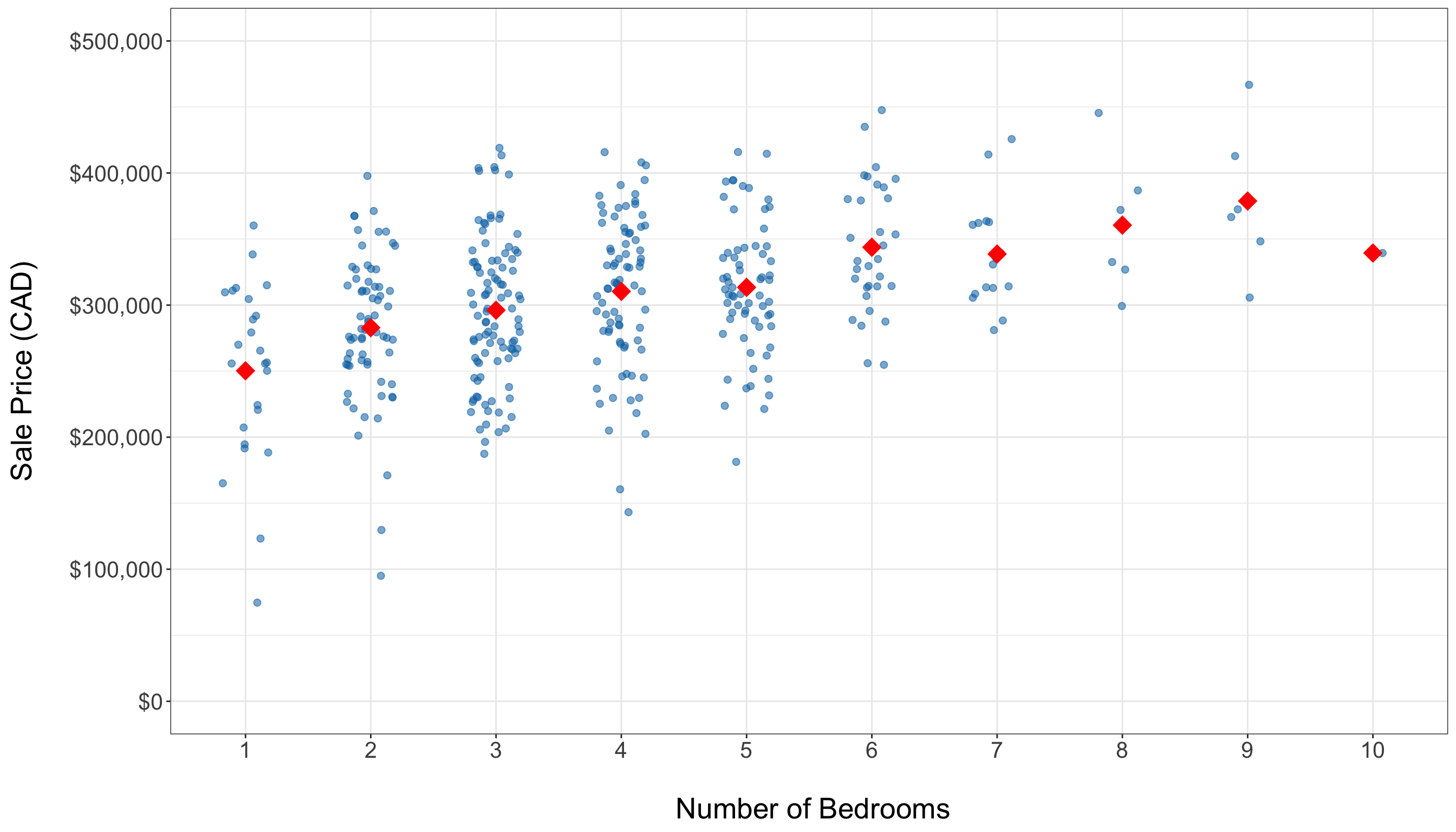

- Side-by-side jitter plots, as in Figure 1.6, visualize the distribution of sale prices across different bedroom counts, highlighting spread. Overall, these plots indicate a positive association between the number of bedrooms and housing sale price. Note that the average price (represented by red diamonds) tends to increase with the addition of more bedrooms. The training set predominantly has homes with 3 to 5 bedrooms, and there are some high-priced outliers present even among mid-sized homes.

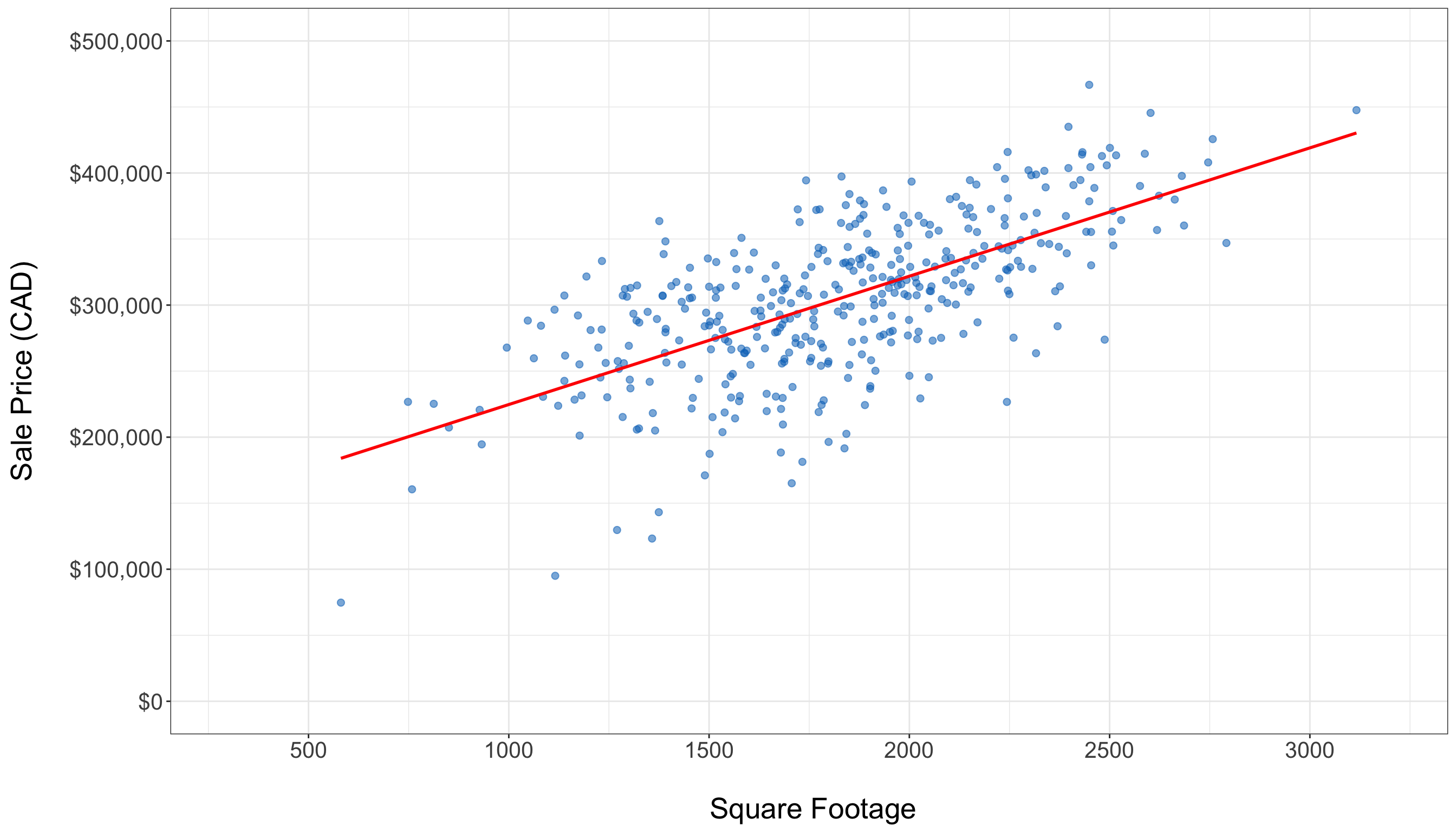

- A scatter plot displaying the relationship between square footage and housing sale price, as in Figure 1.7, illustrates how these two continuous variables interact. There is a clear upward trend in the training data, indicated by the fitted solid red line of the simple linear regression (which is a preliminary regression fit used by different plotting tools in

RorPython, via the model from Chapter 3). Although the variability increases with larger square footage, the overall positive linear pattern is still clear.

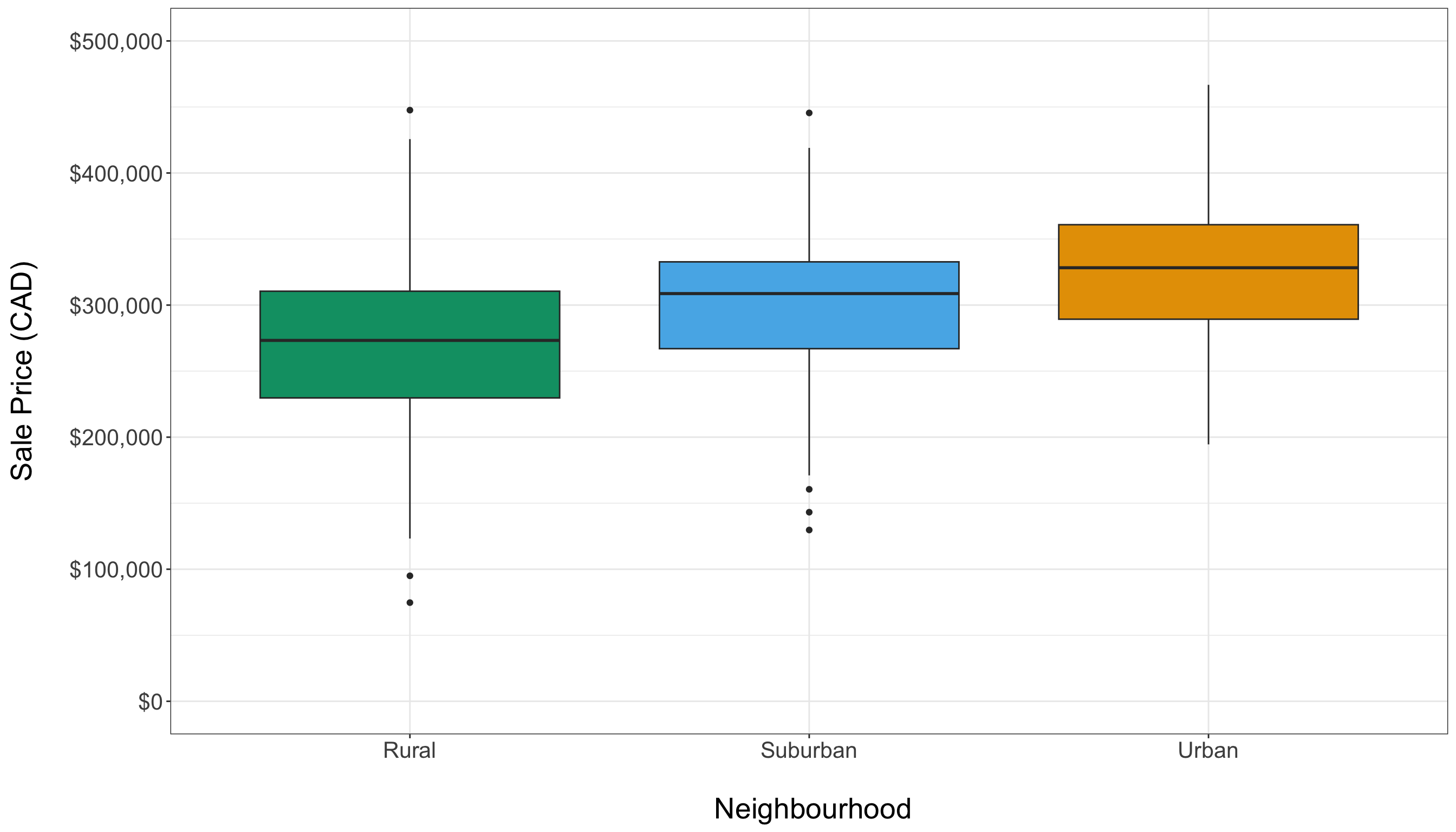

- Side-by-side box plots, as in Figure 1.8, are used to compare housing sale prices across different types of neighbourhoods, highlighting variations in median prices. The training data reveals neighbourhood-specific price patterns: urban homes tend to have higher prices, while rural homes are generally less expensive. However, from a graphical perspective, we do not observe major differences in price spreads between these types of neighbourhoods.

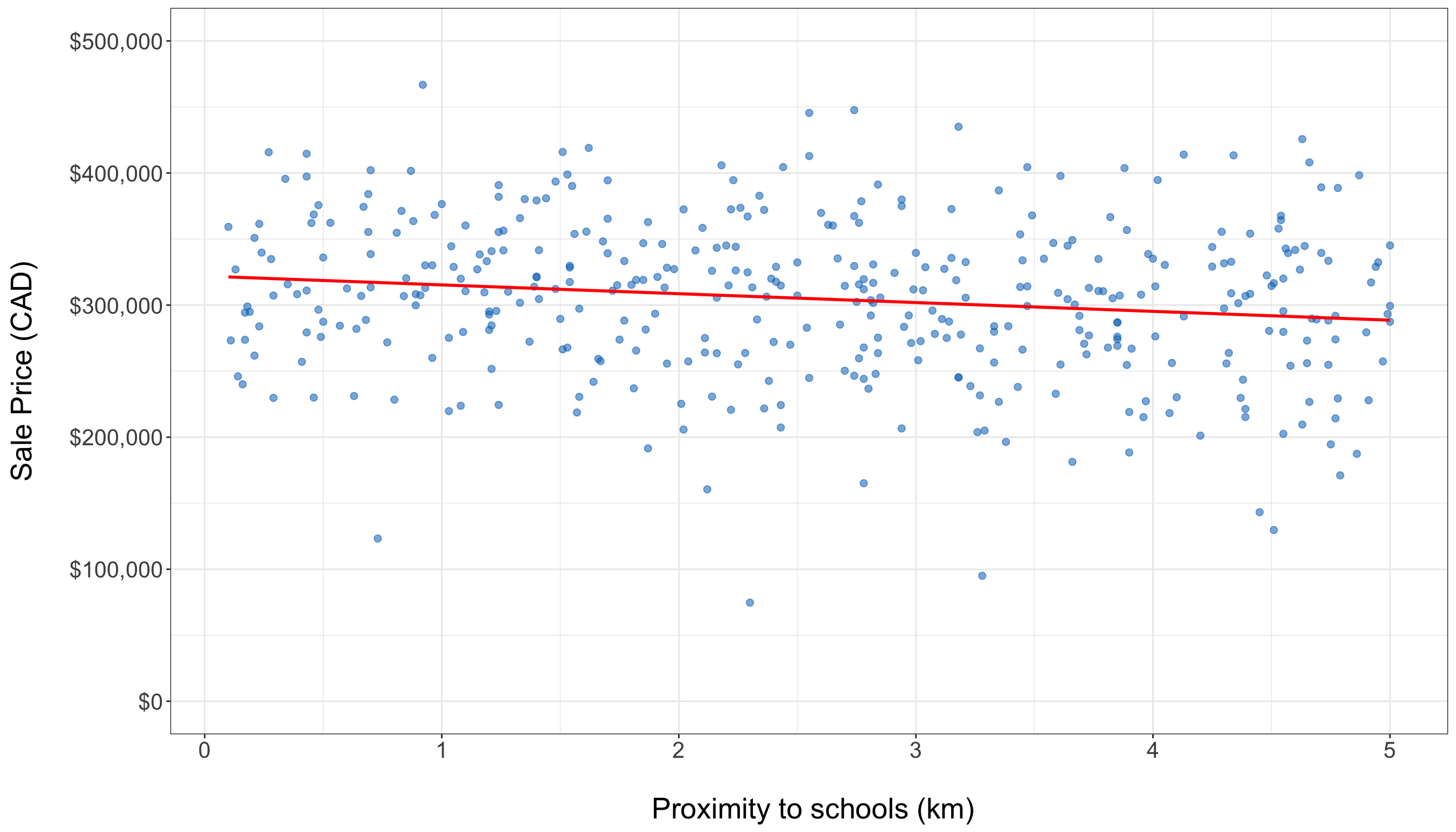

- The scatter plot showing the relationship between proximity to schools and housing sale price, as in Figure 1.9, reveals an almost flat trend in the training data. This observation is supported by the fitted solid red line of the simple linear regression (same model from Chapter 3), indicating a weak graphical relationship between these two variables.

- Descriptive statistics from Table 1.6, such as the mean and standard deviation, summarize continuous variables. In addition, a Pearson correlation matrix from Table 1.7 numerically assesses the relationships between these variables. Note that square footage is positively correlated with housing sale price, while proximity to schools has a negative association.

In displaying and interpreting results, the plots and statistics will guide us in understanding the data. In this specific example, these exploratory insights help identify key factors, such as square footage and neighbourhood type, that influence housing sale prices. They also highlight any outliers that may need further attention during modelling. By following this EDA process, we will establish a solid descriptive foundation for effective data modelling, ensuring that the key variables and their relationships are well understood.

1.4.4 Data Modelling

The previous EDA provides a solid descriptive foundation regarding the identified types of data for our response variable and regressors, as well as their graphical relationships. This information will guide us in selecting a suitable regression model based on the following factors:

- The response type (e.g., whether it is continuous, bounded or unbounded, count, binary, categorical, etc.).

- The flexibility of the chosen model (e.g., its ability to handle extreme values or outliers).

- Its interpretability (i.e., can we effectively communicate our statistical findings to stakeholders?).

In statistical literature, we often encounter classical linear regression models, such as the OLS model discussed in Chapter 3. This model enables us to explain our continuous response variable of interest, denoted as a random variable \(Y\), in the form of a linear combination of a specified set of regressors (the observed \(x\) variables). A linear combination is essentially an additive relationship where \(Y\) depends on the \(x\) variables, which are multiplied by regression coefficients. Alternatively, for both continuous and discrete response variables, we can utilize more complex models that establish a non-linear relationship between \(Y\) and the \(x\) variables. Some of these models are referred to as generalized linear models (GLMs).

For this workflow stage, whether using a classical linear regression model like OLS or a more complex one such as a GLM (a type of model that is covered in this book along other models that explain survival time responses), we need to establish modelling equations that align with both theoretical and data-driven considerations. These modelling equations will need definitions for the parameters, link functions (if applicable as in the case of GLMs), and any relevant distributional assumptions based on the chosen model. Then, once we have defined our modelling equation(s), we can proceed to the estimation stage. Note that this data modelling stage is iterative, as illustrated in Figure 1.10. The process will depend heavily on the results obtained during the goodness-of-fit stage.

Example: OLS Regression Model for Housing Data

Let us continue with our housing example, where our response of interest is the sale price of a house in CAD, as shown in Table 1.1. During the study design stage outlined in Section 1.4.1, we identified two key inquiries: inferential and predictive. The inferential inquiry focuses on understanding the statistical associations between the sale price and other variables, such as square footage, number of bedrooms, and proximity to schools. In contrast, the predictive inquiry involves fitting a suitable model to obtain estimates that will enable us to predict housing sale prices based on these same features.

Before selecting a model, we need to define our mathematical notation for all the variables involved. Let \(Y_i\) represent the continuous sale price of the \(i\)th house in CAD from a dataset of size \(n\) used to estimate a chosen model in general, where \(i = 1, 2, \ldots, n\). For the observed explanatory variables, we define the following:

- \(x_{i, 1}\) is the number of bedrooms in the \(i\)th house, which is a count-type variable.

- \(x_{i, 2}\) is the continuous square footage of the \(i\)th house.

- \(x_{i, 3}\) is the continuous proximity to schools for the \(i\)th house in km.

To mathematically represent the categorical and nominal neighbourhood types to which the \(i\)th house could belong, we need more than one variable \(x\). In regression analysis involving nominal explanatory variables, we typically use binary dummy variables. In this example, these dummy variables will help us identify the neighbourhood type of each house. Generally, for a nominal variable with \(u\) categories, we need to define \(u - 1\) dummy variables, as shown in Table 1.8.

| Level | \(x_{i, 1}\) | \(x_{i, 2}\) | \(\cdots\) | \(x_{i, u - 1}\) |

|---|---|---|---|---|

| \(1\) | \(0\) | \(0\) | \(\cdots\) | \(0\) |

| \(2\) | \(1\) | \(0\) | \(\cdots\) | \(0\) |

| \(\vdots\) | \(\vdots\) | \(\vdots\) | \(\ddots\) | \(\vdots\) |

| \(u\) | \(0\) | \(0\) | \(\cdots\) | \(1\) |

Heads-up on how to use dummy variables!

In Table 1.8, note that level \(1\) is considered the baseline (reference) level. If the \(i\)th observation belongs to level \(1\), then all the dummy variables \(x_{i, 1}, \ldots, x_{i, u - 1}\) will take the value of \(0\). The choice of baseline affects how we interpret the estimated regression coefficients later in our data science workflow.

Table 1.9 shows the dummy variable arrangement for our housing example regarding the neighbourhood type where rural is the baseline level. Since we have three levels (rural, suburban, and urban), our chosen model will have two binary dummy variables for the \(i\)th house:

\[ x_{i, 4} = \begin{cases} 1 \quad \text{if the house belongs to a suburban neighbourhood},\\ 0 \quad \text{otherwise}; \end{cases} \tag{1.2}\]

and

\[ x_{i, 5} = \begin{cases} 1 \quad \text{if the house belongs to an urban neighbourhood},\\ 0 \quad \text{otherwise}. \end{cases} \tag{1.3}\]

| Level | \(x_{i,4}\) | \(x_{i,5}\) |

|---|---|---|

| \(\text{Rural}\) | \(0\) | \(0\) |

| \(\text{Suburban}\) | \(1\) | \(0\) |

| \(\text{Urban}\) | \(0\) | \(1\) |

With the mathematical notation for our data variables defined, it is time to choose a suitable regression model to address our inferential and predictive inquiries. Since the nature of \(Y_i\) is continuous, we may consider using OLS regression, as outlined in Chapter 3, although there is an important distributional matter to be highlighted at the end of this section. OLS is typically the first regression model to explore because it is a widely used model that is easy to understand and communicate to stakeholders. We refer to OLS as a parametric model, a distinction that other models, such as the GLMs, also have. Let us define this type of model below.

Definition of parametric model

A parametric model is a type of model that assumes a specific functional relationship between the response variable of interest, \(Y\), which is considered a random variable, and one or more observed explanatory variables, \(x\). This relationship is characterized by a finite set of parameters and can often be expressed as a linear combination of the observed \(x\) variables, which favours interpretability.

Moreover, since \(Y\) is a random variable, there is room to make further assumptions on it in the form of a probability distribution, independence or even homoscedasticity (the condition where all responses in the population have the same variance). It is essential to test these assumptions after fitting this type of models, as any deviations may result in misleading or biased estimates, predictions, and inferential conclusions.

A parametric model, as previously mentioned, allows us to prioritize interpretability in our regression analysis, and OLS offers this advantageous characteristic. The classical setup of OLS describes the relationship between the response variable \(Y\) and the observed variables \(x\) as a linear combination, represented by the following equation for \(i = 1, 2, \ldots, n\) in this housing price example:

\[ Y_i = \underbrace{\beta_0 + \beta_1 x_{i, 1} + \beta_2 x_{i, 2} + \beta_3 x_{i, 3} + \beta_4 x_{i, 4} + \beta_5 x_{i, 5}}_{\text{Systematic Component}} + \underbrace{\varepsilon_i.}_{\substack{\text{Random} \\ \text{Component}}} \tag{1.4}\]

Equation 1.4 indicates two important components in this regression model on its righ-hand side:

- Systematic Component: This component includes six fixed and unknown regression parameters (\(\beta_0\), \(\beta_1\), \(\beta_2\), \(\beta_3\), \(\beta_4\), and \(\beta_5\)) that we will estimate in the next stage using our training data. Note that this component represents the expected value of the response variable \(Y\), conditioned on the observed values of the regressors and it is also the result of the assumptions on the random component below:

\[ \begin{align*} \mathbb{E}(Y_i \mid x_{i, 1}, \ldots, x_{i, 5}) &= \beta_0 + \beta_1 x_{i, 1} + \beta_2 x_{i, 2} + \\ & \qquad \beta_3 x_{i, 3} + \beta_4 x_{i, 4} + \beta_5 x_{i, 5}. \end{align*} \tag{1.5}\]

- Random Component: For the \(i\)th observation, this is denoted by the random variable \(\varepsilon_i\). This component measures how much the observed value of the response may deviate from its conditioned mean, and it is considered random noise. Since \(\varepsilon_i\) is assumed to be a random variable and is added to a fixed systematic component on the right-hand side of Equation 1.4, this aligns with the notion that \(Y_i\) is treated as a random variable on the left-hand side.

We also need to state the modelling assumptions for this OLS case:

- Each observed regressor on the right-hand side of the Equation 1.4 has an associated regression coefficient \(\beta_j\) for \(j = 1, 2, \ldots, 5\) (these were already indicated as part of the regression parameters). These coefficients represent the expected change in the response variable when a specific regressor \(x_{i,j}\) changes by one unit. Additionally, the regression parameter \(\beta_0\) serves as the intercept of this linear model, representing the mean of the response when all five regressors are equal to zero. This entire arrangement allows for a more interpretable model and aids in addressing our inferential inquiry.

- To pave the way for the corresponding inferential test in OLS, the error term \(\varepsilon_i\) is typically assumed to be normally distributed with a mean of zero (this mean is consistent with the conditioned expected value outlined in Equation 1.5). Additionally, it is assumed that the variance is constant across observations, referred to as the so-called homoscedasticity, and denoted as \(\sigma^2\) (another regression parameter fixed and unknown to estimate via the training set). Furthermore, all error terms \(\varepsilon_i\) are assumed to be statistically independent. These assumptions can be mathematically represented as follows:

\[ \begin{gather*} \mathbb{E}(\varepsilon_i) = 0 \\ \text{Var}(\varepsilon_i) = \sigma^2 \\ \varepsilon_i \sim \text{Normal}(0, \sigma^2) \\ \varepsilon_i \perp \!\!\! \perp \varepsilon_k \; \; \; \; \text{for} \; i \neq k \; \; \; \; \text{(independence)}. \end{gather*} \]

Heads-up on the use of an alternative systematic component!

The systematic component in Equation 1.4 is considered linear with respect to the regression parameters \(\beta_1\), \(\beta_2\), \(\beta_3\), \(\beta_4\), and \(\beta_5\). Therefore, we can model the regressors using mathematical transformations, such as the following polynomial:

\[ Y_i = \beta_0 + \beta_1 x_{i, 1} + \beta_2 x_{i, 2}^2 + \beta_3 x_{i, 3}^3 + \beta_4 x_{i, 4} + \beta_5 x_{i, 5} + \varepsilon_i. \]

This linearity condition on the parameters makes our OLS model flexible enough to improve accuracy in predictive inquiries. However, we would sacrifice some interpretability for inferential inquiries.

Before we conclude this stage, note that Chapter 2 will explore the fundamentals of probability and statistical inference in greater depth. This exploration will enhance our understanding of the modelling assumptions underlying the regression models discussed throughout this book. Additionally, we will broaden our perspective on regression to consider more appropriate models for nonnegative responses, instead of relying on OLS with the assumption of an unbounded, normally distributed response which might be unrealistic for nonnegative housing prices (and still a mild violation on our response assumptions, given that the housing prices appear to have a bell-shaped distribution as shown in Figure 1.5).

1.4.5 Estimation

Based on the data we have and our EDA, defining a suitable regression model (along with the equations that relate the response variable \(Y\) to the regressors \(x\) and the corresponding regression parameters) is an essential step in our data science workflow. This leads us to the next stage: estimation. In this stage, we aim to obtain what we refer to as modelling estimates using our training dataset. The method we choose for estimation largely depends on the specific regression model we adopt to address our inquiries.

In all core chapters of this book, except for Chapter 3, the default method we will use is maximum likelihood estimation (MLE) (the fundamental insights are provided in Section 2.2). Regardless of the chosen estimation method, these estimates (denoted with a hat notation) will allow us to quantify the association (or causation, if applicable) between the outcome variable \(Y\) and the \(x\) regressors. This is particularly relevant in inferential inquiries, provided that the results are statistically significant, as discussed in Section 1.4.7.

As illustrated in Figure 1.11, the data modelling stage will yield the necessary components for this phase in the form of a suitable model, modelling equation, and regression parameters. We will then use the corresponding R or Python fitting function, where the inputs will include the coded modelling equation (which contains the variables of interest: the outcome and the regressors) along with the training set. These fitting functions serve the following purposes:

- In most regression models, obtaining analytical (i.e., exact) solutions for our parameter estimates is not feasible. Specifically, MLE can employ an optimization method such as Newton-Raphson or iteratively reweighted least-squares (IRLS), as we aim to maximize the log-likelihood function that involves our observed data and unknown parameters. This function is numerically optimized to estimate these parameters. More information regarding numerical optimization in MLE, including a brief discussion of the Newton-Raphson method, can be found in Section 2.2.3. Throughout the core chapters of the book, we will delve deeper into the fundamentals of IRLS.

- Once the estimation process has been completed using the appropriate log-likelihood function and numerical optimization method (i.e., when the method has converged to an optimal solution), we will obtain outputs that include parameter estimates. These parameter estimates will be used in the subsequent workflow stage, called goodness of fit, to statistically assess whether our fitted model satisfies the assumptions we made about our data in the previous modelling stage.

Example: Fitting the OLS Regression Model for Housing Data

Let us examine the training_data for this housing case, which consists of 400 observations. As shown in Table 1.1, for the \(i\)th house, we have different regressors: the number of bedrooms (bedrooms, denoted as \(x_{i, 1}\)), the continuous square footage (sqft, denoted as \(x_{i, 2}\)), the continuous proximity to schools in km (school_distance, denoted as \(x_{i, 3}\)), and neighborhood type (represented by dummy variables \(x_{i, 4}\) as in Equation 1.2 and \(x_{i, 5}\) as in Equation 1.3, where rural is the baseline). The response variable we are interested in is the continuous housing sale price in CAD (sale price, denoted as \(Y_i\)). Additionally, we will revisit our modelling approach as outlined in Equation 1.4:

\[ Y_i = \beta_0 + \beta_1 x_{i, 1} + \beta_2 x_{i, 2} + \beta_3 x_{i, 3} + \beta_4 x_{i, 4} + \beta_5 x_{i, 5} + \varepsilon_i, \]

where \(\beta_0\), \(\beta_1\), \(\beta_2\), \(\beta_3\), \(\beta_4\), and \(\beta_5\) represent the unknown regression parameters to be estimated to address our inferential and predictive inquiries. Additionally, we have another parameter to estimate, that is the common variance between the random components of each observation (\(i = 1, 2, \ldots, n\)):

\[ \text{Var}(\varepsilon_i) = \sigma^2. \]

Having set up the coding starting point for this estimation, we need to use the corresponding fitting functions to find \(\hat{\beta}_0\), \(\hat{\beta}_1\), \(\hat{\beta}_2\), \(\hat{\beta}_3\), \(\hat{\beta}_4\), \(\hat{\beta}_5\), \(\hat{\sigma}^2\) via OLS regression (as we already decided in the data modelling stage). Therefore, let us the following function and libraries:

-

R: We fit an OLS model via thelm()function with the responsesale_priceand four explanatory variables:bedrooms,sqft,school_distance, andneighbourhoodvia thetraining_data. The resulting model object, stored intraining_OLS_model, contains the estimated parameters and related statistics (which will be explained in the results stage via the testing set). The output shows the systematic component used (i.e.,formula) along with the estimated regression parameters \(\hat{\beta}_0, \hat{\beta}_1, \dots, \hat{\beta}_5\). -

Python: This code fits the same OLS model using the {statsmodels} (Seabold and Perktold 2010) library. It begins by specifying and fitting this OLS model throughsmf.ols(), which regressessale_pricebased on four explanatory variables:bedrooms,sqft,school_distance, andneighbourhoodvia thetraining_data. The.fit()method estimates the regression coefficients and computes related statistics. Then, we print the estimated regression parameters \(\hat{\beta}_0, \hat{\beta}_1, \dots, \hat{\beta}_5\).

# To import R-generated datasets to Python environment

library(reticulate)

# Fitting the OLS model

training_OLS_model <- lm(

formula = sale_price ~ bedrooms + sqft +

school_distance + neighbourhood,

data = training_data

)

training_OLS_model

Call:

lm(formula = sale_price ~ bedrooms + sqft + school_distance +

neighbourhood, data = training_data)

Coefficients:

(Intercept) bedrooms sqft

53948.21 14107.19 99.01

school_distance neighbourhoodSuburban neighbourhoodUrban

-6963.93 26908.04 60509.46 # Importing libraries

import statsmodels.formula.api as smf

# Importing R-generated training set via R library reticulate

training_data = r.training_data

# Fitting the OLS model

training_OLS_model = smf.ols(

formula="sale_price ~ bedrooms + sqft + school_distance + neighbourhood",

data=training_data

).fit()

training_OLS_model.paramsIntercept 53948.206229

neighbourhood[T.Suburban] 26908.043443

neighbourhood[T.Urban] 60509.459093

bedrooms 14107.194703

sqft 99.006944

school_distance -6963.930281

dtype: float64Heads-up on the OLS analytical estimates!

Since the inferential inquiry in this example aims to understand the statistical associations between housing sale prices and their explanatory variables, while the predictive inquiry seeks to fit a suitable model that allows us to predict housing sale prices based on these same features, we might be tempted to use training_OLS_model to address both inquiries right away. However, according to our data science workflow, these analyses should be conducted during the results stage, after we have completed model diagnostic checks. For now, we will use the training_data and training_OLS_model to perform this corresponding goodness of fit in the next stage.

1.4.6 Goodness of Fit

Once we have estimated our regression model on the training data, we move into goodness of fit. Think of this stage as quality control for the model we just trained: we check whether the fitted model behaves the way our data modelling stage said it should. That matters because every model we write down is an abstraction of reality: a simplified story about how the observed data could plausibly be generated in the population or system we care about. In regression analysis, that story is encoded by a specific modelling choice like OLS (or a GLM, for instance), together with its parametrization and distributional assumptions. Goodness-of-fit checks help us answer a practical question:

Is this modelling story compatible with what we actually see in the data and enough that we can trust the inferences and predictions we plan to report?